ICT Infrastructures - University of Pisa (Italy)

Since little material is available on the ICT Infrastructures course, this is a recap and summary of the classes. The notes compile the course contents and focus on the topics covered in Prof. Antonio Cisternino's OneNote Notebook.

It is highly recommended to study with the EMC DELL slides provided under <<_Raccolta contenuto>>, which will not be uploaded here for copyright reasons. Each heading corresponds to a module. If you find any error, please fork and submit a pull request!

Table of contents

- Introduction

- Cloud Computing Reference Model [Module 2]

- Data centers

- Design and Architectures

- Fabric

- Disks and Storage

- Servers

- Cloud

- In class exercises

- Other questions

- About numbers

- Real Use Cases

- Open Source

- Books & Guides

- References

- Contributors

Introduction

The ICT world is changing (and will keep changing beyond the last time these notes were updated) and a lot of axioms about its infrastructures are becoming outdated. A couple of examples:

-

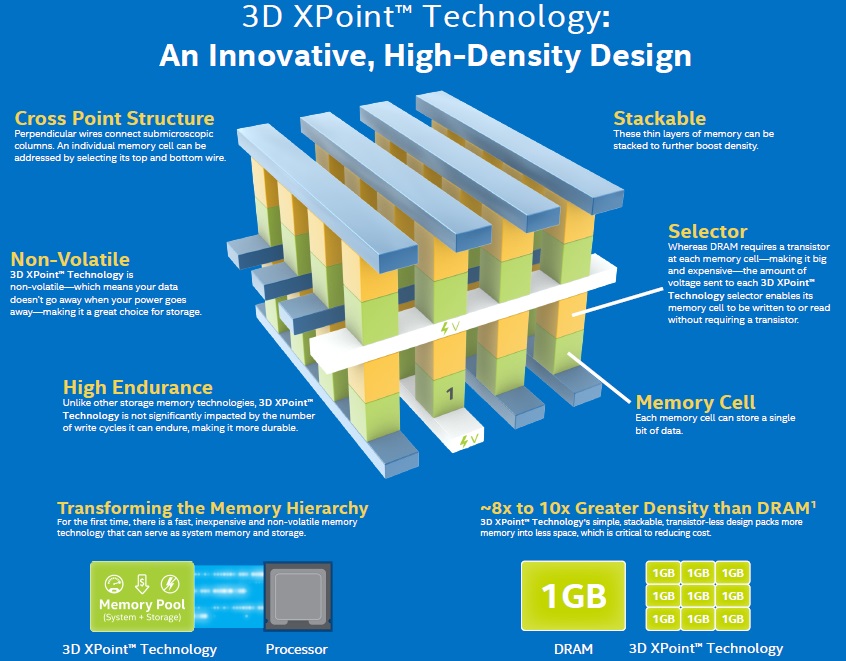

a few years ago it was known that the main bandwidth bottleneck was the disk, and so a system's whole performance was evaluated with reference to disk usage, number of IOs operations and so on... This, nowadays, is false. Just think of Intel Optane SSD where the new SSD technology based on 3D NAND permits to write and read faster than previous SSD, and so we have to redesign the entire system accordingly. Additionally, nvRAM (non-volatile RAM) are becoming more of an industry standard. nvRAM is a storage module similar to the hard drive but much faster.

- Application and server distribution has changed too. In the past, many applications were managed on each server with shared storage. Nowadays we deploy a large application on server clusters (i.e. multiple server units working together as one) with local storage, so new systems to develop and manage distributed computing applications are needed (Hadoop, Cassandra, Spark...).

Cloud Computing Reference Model [Module 2]

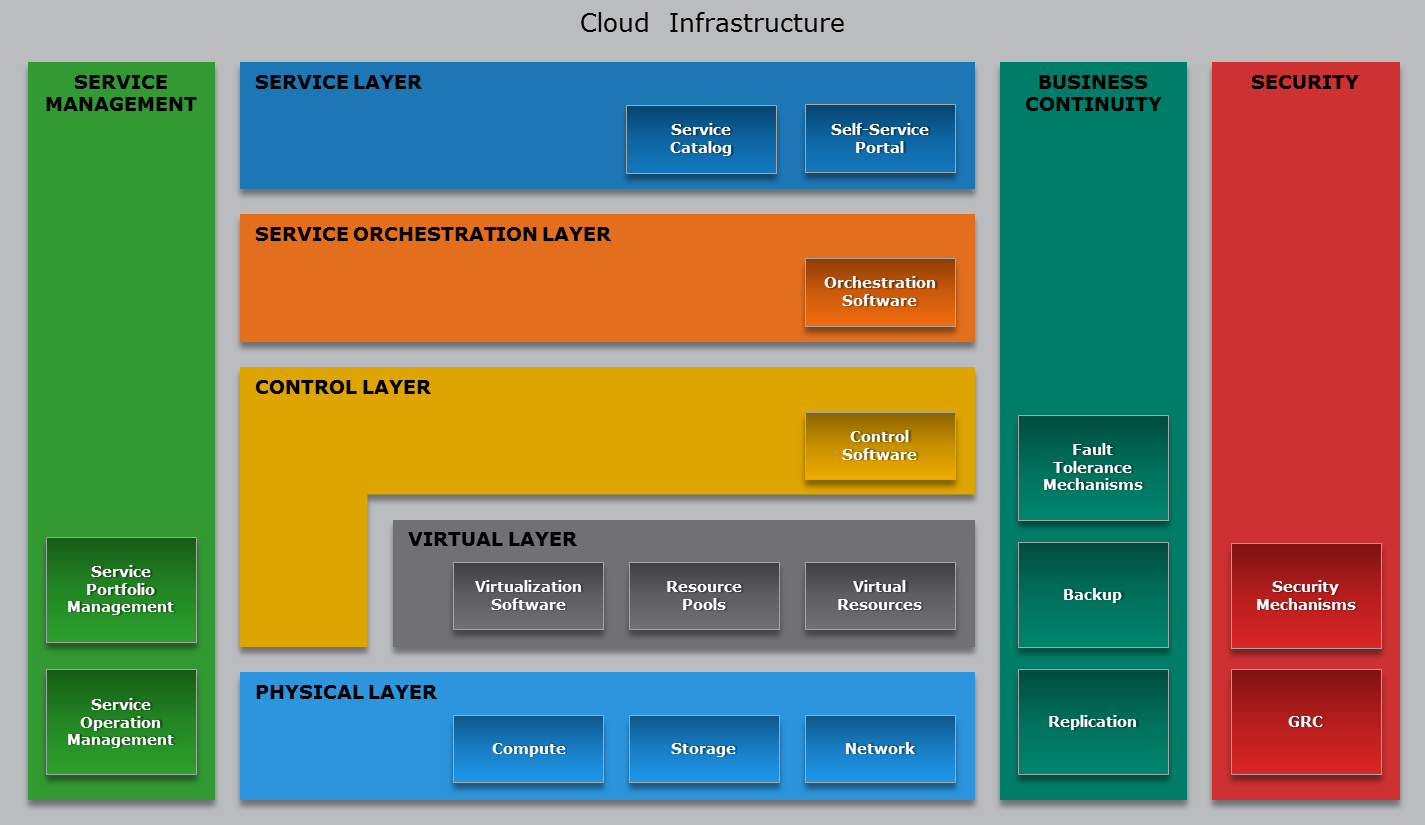

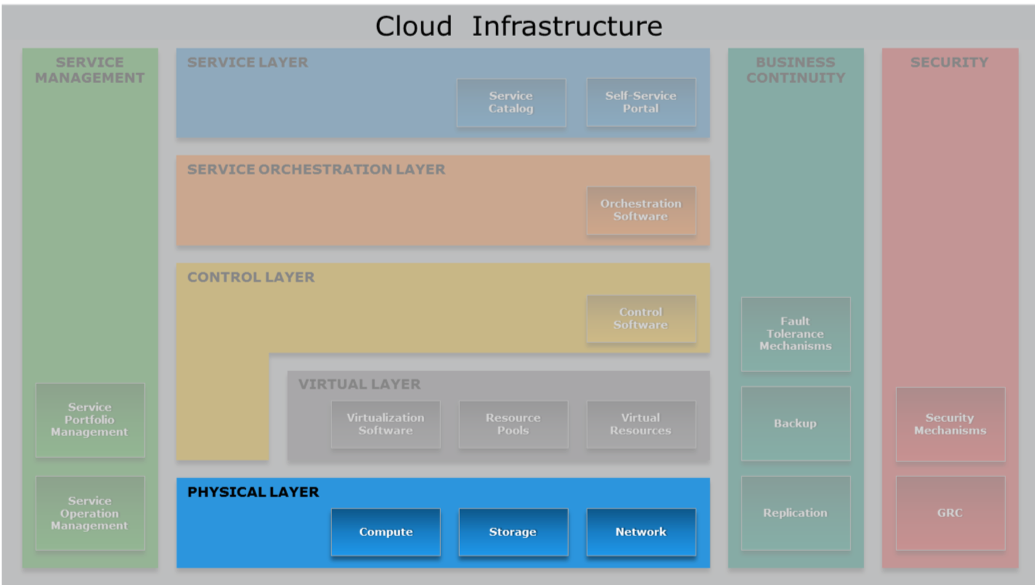

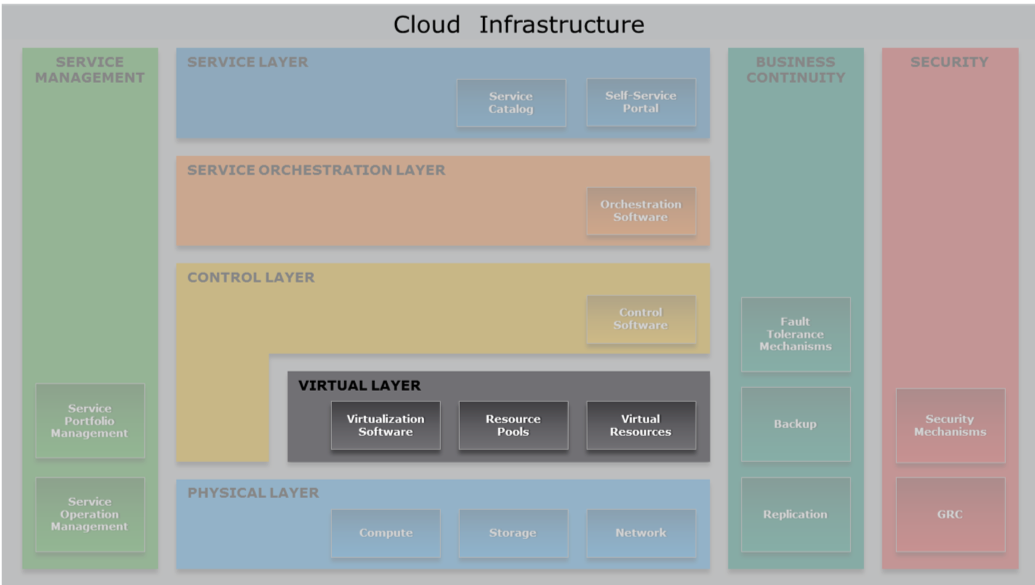

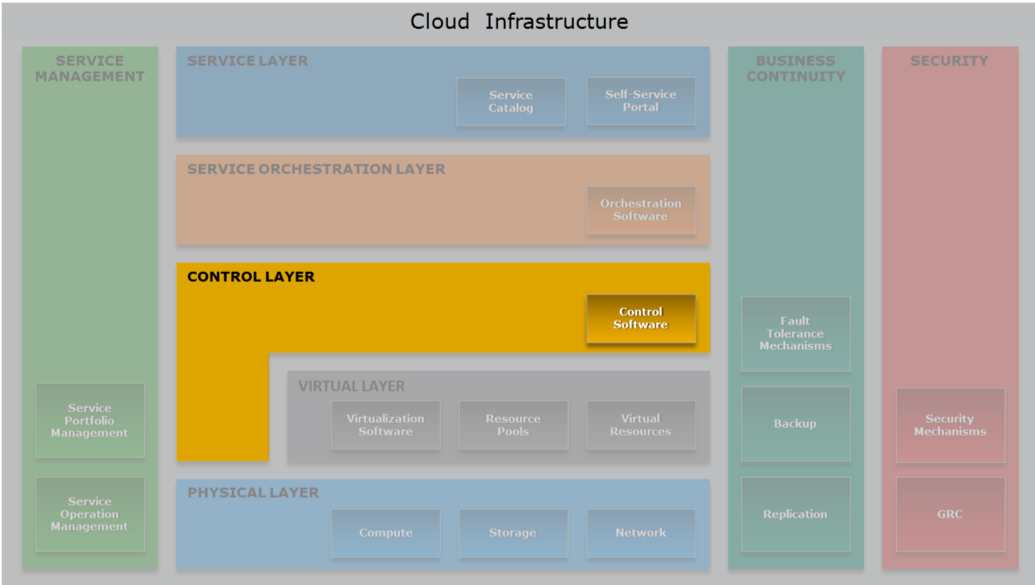

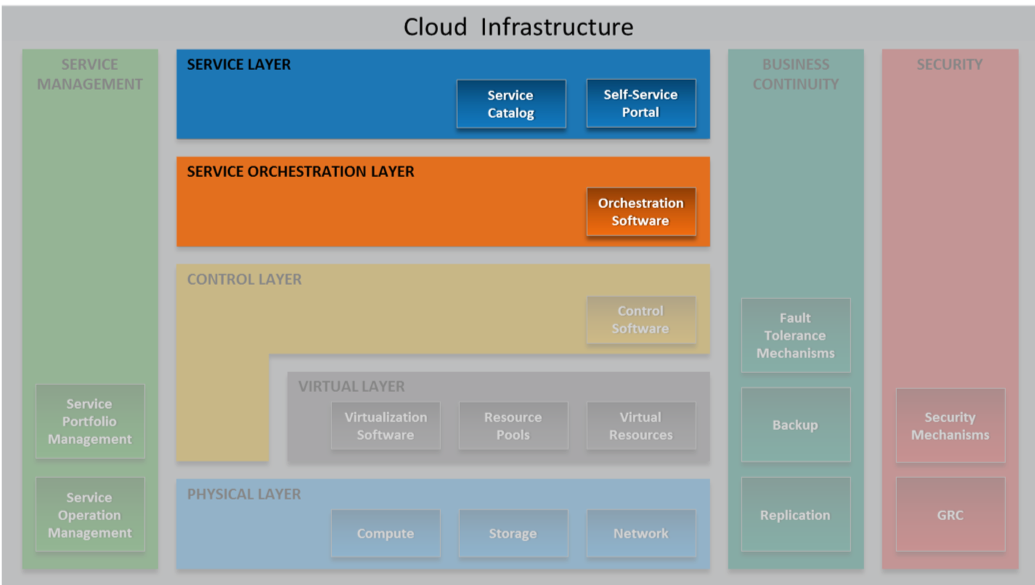

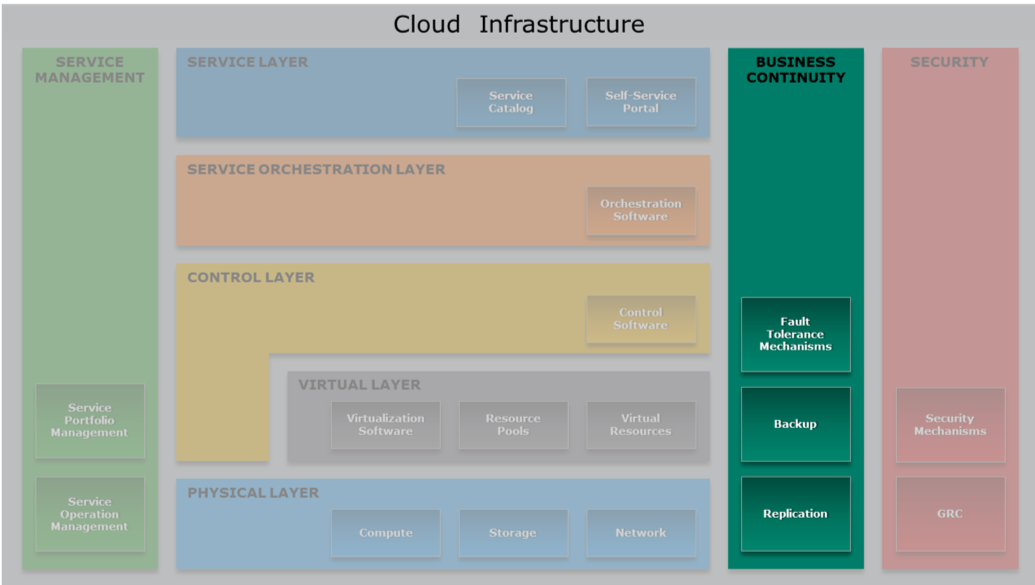

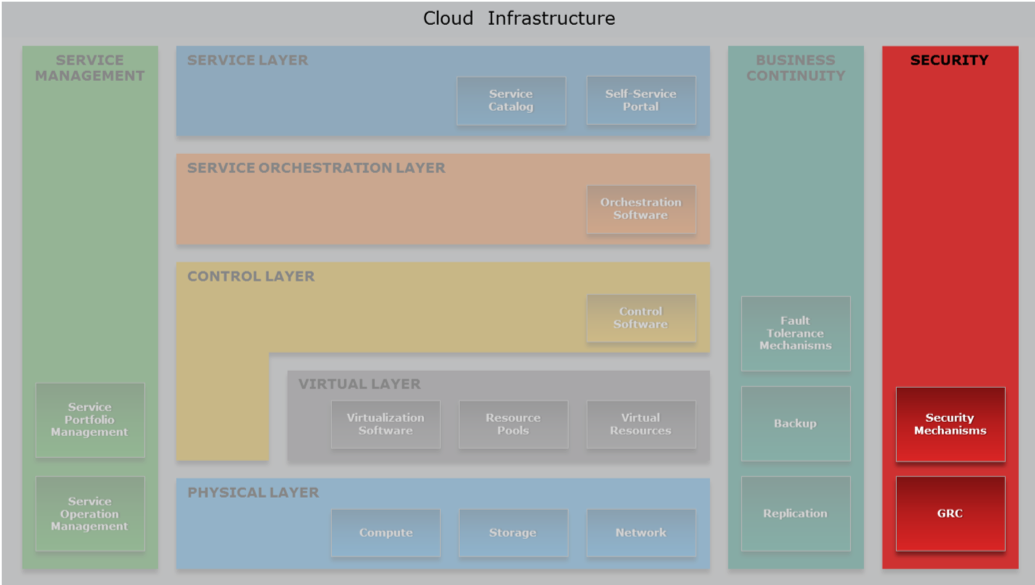

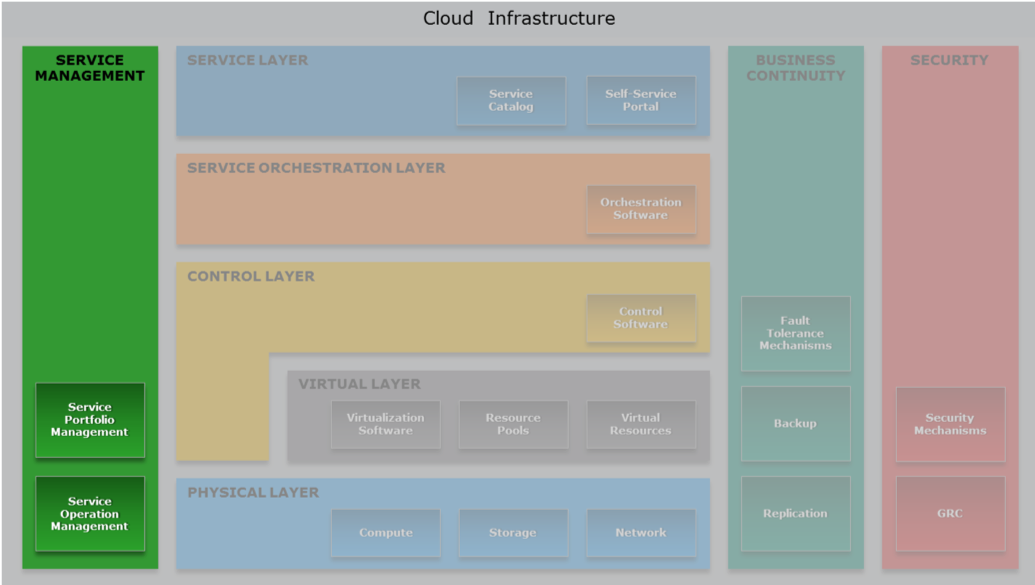

Since the course revolves around Cloud Computing architectures, it is important to keep the following reference model of the cloud stack in mind:

- Physical Layer [Module 3]: Foundation layer of the cloud infrastructure. The physical infrastructure supporting the operation of the cloud.

- Virtual Layer [Module 4]: Abstracts physical resources and makes them appear as virtual resources, e.g. a physical server is partitioned into many virtual ones to use the hardware better. The High Performance Computing model bypasses the virtual layer for performance reasons.

- Control Layer [Module 5]: Dynamic Resource configuration and allocation.

- Orchestration Layer [Module 6]: workflows for task automation.

- Service Layer [Module 6]: self-service portal/interface and service catalog. Allows cloud users to obtain the resources they need without knowing where they are allocated.

- Service Management [Module 9]: at both the operational and the business level.

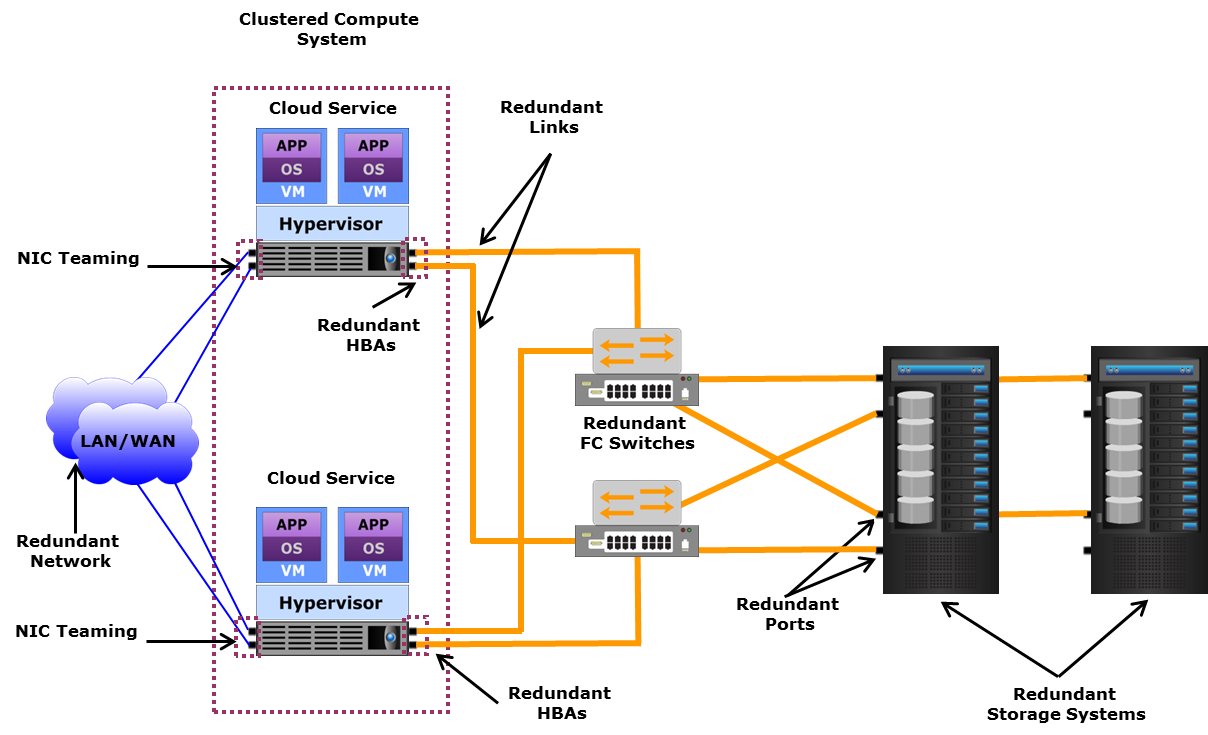

- Business Continuity [Module 7]: Ensures the availability of services in line with SLAs. For example:

  - Backups vs replicas: doing a backup of 1 PB may be a problem.

  - Fault tolerance: I should be able to power off a server without anyone noticing it.

  - Live migration: upgrading the software or the firmware while the system is running.

- Security [Module 8]: Governance, risk and compliance. Also covers things like GDPR, phishing, antivirus, firewalls and DoS attacks.

Data centers

We start the course with datacenter design, and see how a datacenter is built to support current and future requirements, scalability, etc.

A data center is a facility used to house computer systems and associated components, such as telecommunications and storage systems. It generally includes redundant or backup components and infrastructure for power supply, data communications connections, environmental controls (e.g. air conditioning, fire suppression) and various security devices. A large data center is an industrial-scale operation using as much electricity as a small town.

On average there are only 6 people managing 1 million servers.

Prefabricated groups of racks, already cabled and cooled, are automatically inserted in the datacenter (POD - Point Of Delivery). If something is not working in the prefabricated unit, the specific server is shut down. If more than 70% of the unit is not working, the POD producer simply replaces the entire unit.

The datacenter is a place where we concentrate IT systems in order to reduce costs. Servers are demanding in terms of power, cooling and security.

Design and Architectures

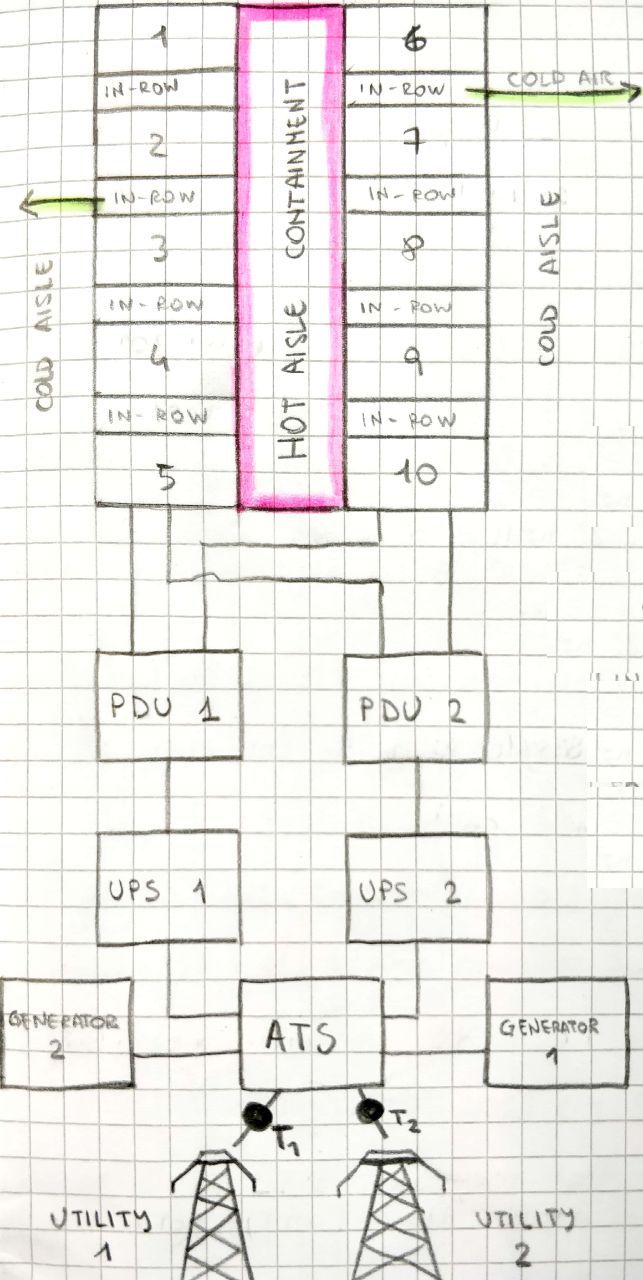

Cooling

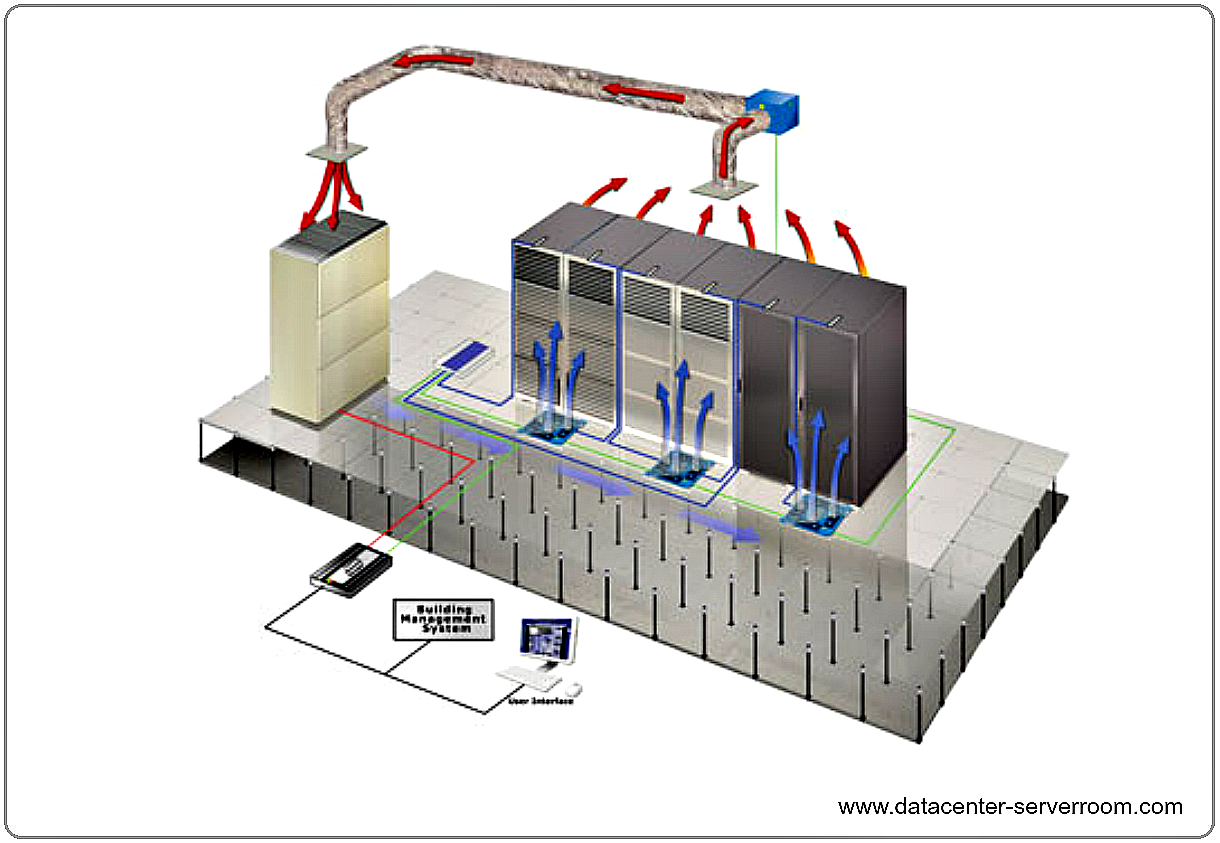

Today cooling is mostly air based; liquid cooling is just beginning to spread.

The air pushed through the server heats up by 10-15 degrees.

CRAC: Computer Room Air Conditioner

Popular in the '90s (3-5 kW/rack), but not very efficient in terms of energy consumption.

There is a raised floor, under which all the cabling and the cooling distribution run. The air rises by thermal convection to the top of the room, where it is captured, cooled and re-introduced.

Drawbacks are density (if we want to go dense this approach fails) and the absence of locality. No one is using this technique today.

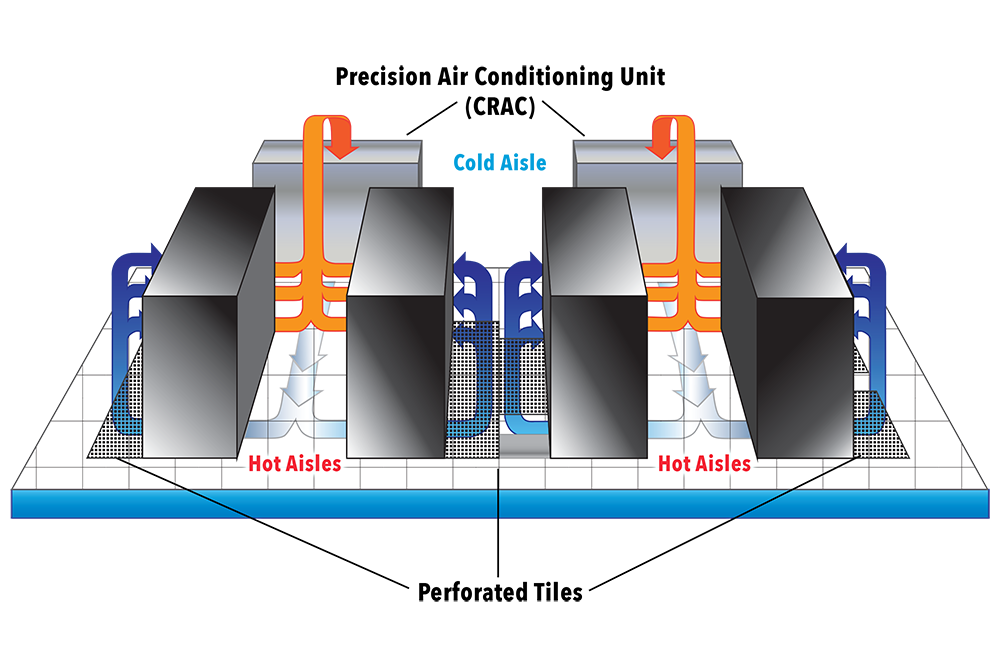

Hot/Cold aisles

The building blocks of this architecture are hot and cold corridors, with servers placed front-to-front and back-to-back, which optimizes cooling efficiency.

Workload balancing may be a problem: one rack can be hotter than another depending on its workload, so it is difficult to modulate the amount of hot and cold air. In the CRAC model the solution is to pump enough air for the highest consumer, since it is not possible to act only where needed, and this wastes energy. This problem is not present in the in-row cooling technology.

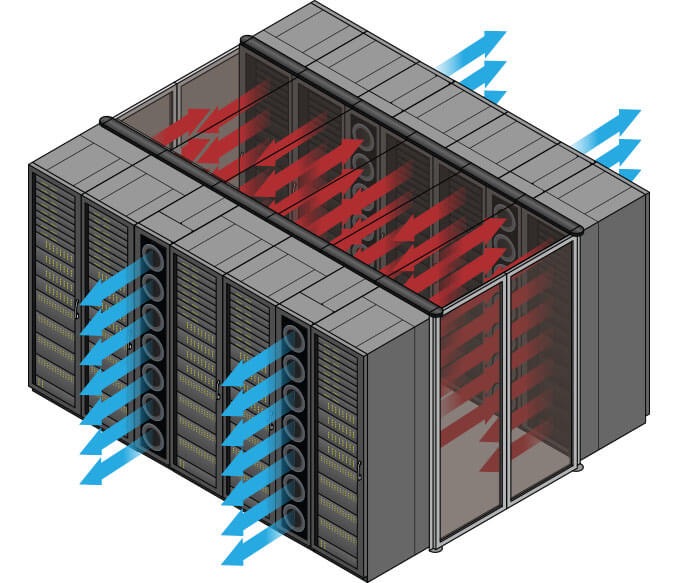

In-Row cooling

In-row cooling technology is a type of air conditioning system commonly used in data centers (15-60 kW/rack), in which the cooling unit is placed between the server cabinets in a row to deliver cool air to the server equipment more effectively.

In-row cooling systems use a horizontal airflow pattern utilizing hot aisle/cold aisle configurations and they only occupy one-half rack of row space without any additional side clearance space. Typically, each unit is about 12 inches wide by 42 inches deep.

These units may be a supplement to raised-floor cooling (creating a plenum to distribute conditioned air) or may be the primary cooling source on a slab floor.

The in-row cooling unit draws warm exhaust air directly from the hot aisle, cools it and distributes it to the cold aisle. This ensures that inlet temperatures are steady for precise operation. Coupling the air conditioning with the heat source produces an efficient direct return air path; this is called close coupled cooling, which also lowers the fan energy required. In-row cooling also prevents the mixing of hot and cold air, thus increasing efficiency.

It's possible to give more cooling to a single rack by modulating the air it receives. In front of the rack there are temperature and humidity sensors. Humidity should be avoided because it can condense due to the temperature differences, and the resulting water conducts electricity.

There are systems that collect data from the sensors and adjust the fans. The racks are covered to separate cool air from hot air. It's also possible to optimize the datacenter cooling according to the temperature changes of the region where the datacenter is located, applying "static analysis" to the datacenter location in order to optimize resource consumption. Programs are available to simulate the airflows in a datacenter in order to optimize the fans.

Usually every 2 racks (each 70 cm) there should be a cooling row (30 cm).

Liquid cooling

Liquid cooling (CoolIT is one vendor of such systems) consists in bringing water directly to the CPUs.

Having water in a data center is a risky business, but this solution lowers the temperature by ~40%. One way of chilling the water is to push it down into the ground. It requires a Water Distribution System, analogous to the Power Distribution System.

A lot of research has lately been invested in oil-cooled computers, particularly in the context of High Performance Computing. This is a safer solution because mineral oil is not a conductor, so everything can be immersed in the oil to maximize the effectiveness of the cooling. The problem with this technique is that the cables slowly draw the oil out.

Other ideas

A typical approach to cool the air is to place chillers outside the building. Another is geocooling, which exploits the cold found at depth: the main idea is to dig a deep hole in the ground and make the pipes pass through it.

Current

A 32 kW datacenter is small (even though it consumes as much as 10 apartments).

For efficiency reasons, datacenters use direct current (DC) instead of alternating current (AC): a DC power architecture contains fewer components, which means less heat production and hence less energy loss. However, power is nowadays transported as AC, so a conversion to DC is required, using AC/DC converters (rectifiers). Industrial power is supplied at 380 V in 3 phases. Also, note that direct current is more dangerous than alternating current.

The conversion from AC to DC dissipates heat, and it is characterized by a factor <= 1 that gives the efficiency of the transformation. This factor generally changes according to the amount of current needed (idle vs under load).

For example an idle server with 2 CPUs (14 cores each) consumes 140 Watts.

Power Distribution

The amount of current allowed in a data center is given by the amperes available on the PDU (Power Distribution Unit).

There are one or more lines (for reliability and fault tolerance reasons) coming from different generators to the datacenter. For example, each line may carry 80 kW, i.e. about 200 A, which is enough for 6 racks at 32 A per rack. Since a rack may not use its whole 32 A, more racks can often be attached.
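The rack count above is a simple current budget. A minimal sketch of it follows; the function name is illustrative, and the 20 A "measured" draw is a hypothetical figure that does not come from the notes.

```python
import math

def racks_per_line(line_amps: float, amps_per_rack: float) -> int:
    """Number of racks one power line can feed with a given per-rack current budget."""
    return math.floor(line_amps / amps_per_rack)

# Figures from the text: a line of about 200 A, 32 A reserved per rack.
print(racks_per_line(200, 32))  # 6

# Hypothetical case: racks that really draw only 20 A each (assumed value).
print(racks_per_line(200, 20))  # 10
```

Budgeting on the measured draw instead of the nominal 32 A is what lets more racks share the same line, at the cost of less headroom.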

The lines are attached to a UPS (Uninterruptible Power Supply/Source). It is a rack or half a rack of batteries, not enough to keep the servers on for long, although in some cases it can power the datacenter for ~20 minutes. UPSs are also used to prevent current oscillations. There are also a Control Panel and a Generator: when the power lines fail, the UPS is active between the failure and the start of the generator, ensuring a smooth transition while the energy source is switched. The energy that arrives at the UPS has to be divided among the servers and the switches.

The UPS is attached to the PDU (Power Distribution Unit), which is linked to the servers. For redundancy reasons, a server is powered by a pair of lines, usually attached to two different PDUs. The server uses both lines, so that there is continuity if one of them fails. The rack PDU has its power plugs in a row, and they can be monitored via a web server running on the PDU.

Example of rack PDU: 2 banks, 12 plugs each, 16 A each bank, 15 KW per rack, 42 servers per rack.

Power factor

Alternating current (AC) supplies our buildings and equipment. AC is more efficient for power companies to deliver, but when it hits the equipment's transformers, it exhibits a characteristic known as reactance.

Reactance reduces the useful power (watts) available from the apparent power (volt-amperes). The ratio of these two numbers is called the power factor (PF). Therefore, the actual power formula for AC circuits is watts = volts x amps x power factor. Unfortunately, the PF is rarely stated for most equipment, but it is always a number of 1.0 or less, and about the only thing with a 1.0 PF is a light bulb.

For years, large UPS systems were designed based on a PF of 0.8, which meant that a 100 kVA UPS would only support 80 kW of real power load.

The majority of large, commercial UPS systems are now designed with a PF of 0.9. This recognizes that most of today's computing technology presents a PF of between 0.95 and 0.98 to the UPS. Some UPS systems are even designed with PFs of 1.0, which means the kVA and kW ratings are identical (100 kVA = 100 kW). However, since the IT load never presents a 1.0 PF, for these UPS systems, the actual load limit will be the kVA rating.
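The relation between kVA and kW ratings can be checked with a few lines of arithmetic. This is only a sketch of the formula quoted above (watts = volts x amps x power factor); the function names are illustrative.

```python
def real_power_kw(apparent_kva: float, power_factor: float) -> float:
    """Real power (kW) that a UPS rated in kVA supports at a given power factor."""
    if not 0.0 < power_factor <= 1.0:
        raise ValueError("the power factor must be in the interval (0, 1]")
    return apparent_kva * power_factor

def watts(volts: float, amps: float, power_factor: float) -> float:
    """Actual power of an AC load: volts x amps x power factor."""
    return volts * amps * power_factor

# UPS designs mentioned in the text: PF 0.8, 0.9 and 1.0 on a 100 kVA unit.
for pf in (0.8, 0.9, 1.0):
    print(f"100 kVA at PF {pf}: {real_power_kw(100, pf):.0f} kW")
```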

Use the hardware manufacturers' online configurations if possible. As a last resort, use the server's power supply rating -- a server with a 300-Watt power supply can never draw 800 Watts. Size the power systems based on real demand loads.

Dual-corded equipment adds redundancy to IT hardware, and the lines share power load. If a dual-corded server has two 300-Watt power supplies, it can still draw no more than 300 Watts in your power design, because each power supply has to be able to handle the server's full load (not including power supply efficiency calculations).

The other way to estimate total server power consumption is to use industry norms. Unless you're hosting high performance computing, you can probably figure groupings in three levels of density: Low density cabinets run 3.5 to 5 kW; medium density run 5 to 10 kW; high density run 10 to 15 kW. The amount of each rack type to allocate depends on your operation. Generally, data centers operate with about 50% low density cabinets, 35% medium and 15% high density.

If your projected average is more than 1.5 times your existing average, take a closer look at the numbers. This result is fine if you expect a significant density increase, due to new business requirements or increased virtualization onto blade servers. But if there's no apparent reason for such a density growth, re-examine your assumptions.
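As a rough illustration of the industry-norm estimate, the sketch below combines the density classes and the typical mix quoted above into an average cabinet load, and applies the 1.5x sanity check. The data structure and function names are assumptions made for the example.

```python
# (share of cabinets, min kW, max kW) for each density class quoted in the text
DENSITY_MIX = {
    "low": (0.50, 3.5, 5.0),
    "medium": (0.35, 5.0, 10.0),
    "high": (0.15, 10.0, 15.0),
}

def average_density_range(mix):
    """Weighted average cabinet load (kW), as a (lowest, highest) pair."""
    low = sum(share * kw_min for share, kw_min, _ in mix.values())
    high = sum(share * kw_max for share, _, kw_max in mix.values())
    return low, high

def needs_closer_look(projected_kw: float, existing_kw: float, factor: float = 1.5) -> bool:
    """True when the projected average exceeds `factor` times the existing one."""
    return projected_kw > factor * existing_kw

low, high = average_density_range(DENSITY_MIX)
print(f"average cabinet load: {low:.2f} to {high:.2f} kW")
print(needs_closer_look(projected_kw=8.0, existing_kw=5.0))
```

With the typical mix, the average cabinet lands between roughly 5 and 8.25 kW, which is a useful cross-check against a projection built from individual server ratings.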

PUE: Power Usage Effectiveness

PUE is a ratio that describes how efficiently a computer data center uses energy; specifically, how much energy is used by the computing equipment (in contrast to cooling and other overhead).

PUE is the ratio of total amount of energy used by a computer data center facility to the energy delivered to computing equipment. PUE is the inverse of data center infrastructure efficiency (DCIE).

As an example, the PUE of the university's datacenter during 2018 was less than 1.2, while the PUE of the average Italian data center is around 2-2.5.

A PUE equal to 2 means that for each watt used for computing, 1 watt is used for cooling and other overhead.
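The definitions above translate directly into code. The following sketch uses illustrative function names and made-up power readings; only the formulas (PUE = total / IT, DCiE = 1 / PUE) come from the text.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power over IT equipment power."""
    return total_facility_kw / it_equipment_kw

def dcie(pue_value: float) -> float:
    """Data center infrastructure efficiency, the inverse of PUE."""
    return 1.0 / pue_value

def overhead_per_it_watt(pue_value: float) -> float:
    """Watts spent on cooling and other overhead for each watt of computing."""
    return pue_value - 1.0

# Hypothetical readings: 2000 kW drawn by the facility, 1000 kW by the IT equipment.
value = pue(2000.0, 1000.0)
print(value, dcie(value), overhead_per_it_watt(value))
```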

Fabric

The fabric is the interconnection between nodes inside a datacenter. We can think of this level as a bunch of switches and wires.

We refer to North-South traffic for the traffic going out of and coming into the datacenter (Internet), and to East-West traffic for the internal traffic between servers.

Ethernet

The connection can be made with various technologies; the most famous is Ethernet, commonly used in Local Area Networks (LAN) and Wide Area Networks (WAN). Ethernet uses twisted pair and optical fiber links. Ethernet has some well-known features, such as the 48-bit MAC address and the Ethernet frame format, that influenced other networking protocols.

The MTU (Maximum Transmission Unit) goes up to 9 KB with the so-called Jumbo Frames.

On top of Ethernet there are the TCP/IP protocols (this is a standard), which introduce about 70-100 microseconds of latency.

The disadvantage of Ethernet is its low reliability.

Infiniband

Even if Ethernet is so famous, there are other communication standards. InfiniBand (IB), whose best-known vendor is Mellanox, is another standard used in high-performance computing (HPC) that features very high throughput and very low latency (about 2 microseconds). InfiniBand is both a protocol and a physical infrastructure, and it can send messages of up to 2 GB with 16 priority levels.

RFC 4391 specifies a method for encapsulating and transmitting IPv4/IPv6 and Address Resolution Protocol (ARP) packets over InfiniBand (IB).

InfiniBand transmits data in packets of up to 4 KB. A message can be:

- a remote direct memory access read from or write to a remote node (RDMA)

- a channel send or receive

- a transaction-based operation (that can be reversed)

- a multicast transmission

- an atomic operation

Pros:

- no retransmissions

- QoS, traffic preserved, reliable
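To make the difference between an InfiniBand message (up to 2 GB) and a packet (up to 4 KB) concrete, here is a small sketch of how many packets the largest message is split into. It assumes binary units (2 GiB and 4 KiB), which the notes do not specify, and the function name is illustrative.

```python
def packets_per_message(message_bytes: int, packet_bytes: int) -> int:
    """Number of packets needed to carry a message (ceiling division)."""
    return -(-message_bytes // packet_bytes)

MAX_MESSAGE = 2 * 2**30  # 2 GiB, assuming binary units
MAX_PACKET = 4 * 2**10   # 4 KiB, assuming binary units

print(packets_per_message(MAX_MESSAGE, MAX_PACKET))  # 524288
```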

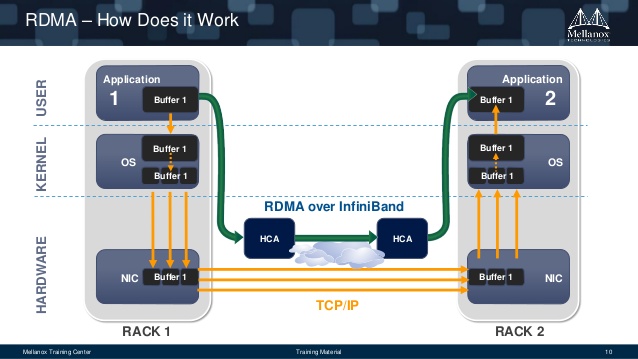

RDMA: Remote Direct Memory Access

RDMA is a direct memory access (really!) from the memory of one computer into that of another, without involving either one's OS and bypassing the CPU. This permits high-throughput, low-latency networking. RDMA achieves this because it is not a protocol but an API, hence there is no protocol overhead.

RDMA supports zero-copy networking by enabling the network adapter to transfer data directly to or from application memory, eliminating the need to copy data between application memory and the data buffers in the operating system, and by bypassing TCP/IP. Such transfers require no work to be done by CPUs, caches, or context switches, and transfers continue in parallel with other system operations. When an application performs an RDMA Read or Write request, the application data is delivered directly to the network, reducing latency and enabling fast message transfer. The main use case is distributed storage.

Omni-Path

Another communication architecture worth a look is Omni-Path. This architecture is owned by Intel and targets high-performance communication (Omni-Path Wikipedia).

The interest of this architecture is that Intel plans to develop technology based on it that will serve as the on-ramp to exascale computing (a computing system capable of at least one exaFLOPS).

Connectors & plugs

Now we try to analyse the problem from the connector point of view. The fastest wire technology available is the optic fiber. It can be divided into two categories:

- mono-modal, or single-mode (1310 nm): expensive, lower loss, covers distances up to 60 km. Used in WAN/MAN

- multi-modal (850 nm): cheap, covers distances up to 2 km. Used in datacenters

They also use different transceivers. There are two kinds of connectors:

- LC: ok for datacenters

- SC: usually used in metropolitan areas because it has a better signal propagation

A cable can have an LC on one side and an SC on the other.

Of course, a wire is a wire, and we need something to connect it to the equipment (a transceiver):

- SFP (Small Form-factor Pluggable), a compact, hot-pluggable optical module transceiver

  - 1 Gbps

- SFP+, can be combined with other SFP+ modules

  - 10 Gbps

- QSFP+ (Quad SFP+)

  - 4x10 Gbps (if combined with SFP+)

- SFP28, where 28 refers to the maximum signalling rate of the lane in Gbps, not to the number of pins

  - 25 Gbps

- QSFP28 (Quad SFP28)

  - 4x25 Gbps (if combined with SFP28)

- RJ45, in datacenters there are almost no installations of it

  - 10/100 Mbps, 1/2.5/5 Gbps

  - Different cables have categories (cat4, cat5, cat6)

  - 2.5/5 Gbps are new standards working on cat5 and cat6 cables respectively, in order to deliver more bandwidth to WiFi access points.

| RJ45 | SFP+ | QSFP+ transceiver module | LC connector |
|---|---|---|---|
| (image not available) | (image not available) | (image not available) | (image not available) |

Nowadays we have:

- 25 Gbps

- 50 Gbps (2 * 25)

- 100 Gbps (4 * 25)

The transceiver module can serve copper or optical fiber; it has a microchip inside and is not cheap.

Software Defined Approach

The Software Defined Approach, where the approach applies to Networking (SDN) or Storage (SDS), is a novel approach to cloud computing.

The software-defined approach abstracts all the infrastructure components (compute, storage, and network) and pools them into aggregated capacity. It separates the control or management functions from the underlying components and moves them to external software, which takes over the control operations to manage the multi-vendor infrastructure components centrally.

This decoupling makes it possible to centralize all data provisioning and management tasks through software that is external to the infrastructure components.

The software runs on a centralized compute system or a standalone device, called the software-defined controller.

Benefits of software-defined approach:

- Improves business agility: minimizes resource provisioning time to get new services up and running

- Provides cost efficiency: makes it possible to use the existing infrastructure and low-cost commodity hardware effectively, lowering CAPEX

- Enables a scale-out architecture

- Provides a central point of access to all management functions

SDN: Software Defined Networking

SDN is an architecture that aims to be dynamic, manageable and cost-effective (SDN Wikipedia). This type of software creates a virtual network in order to manage the network more simply.

The main concepts are the following:

- Network control is directly programmable (also from remote)

- The infrastructure is agile, since it can be dynamically adjustable

- It is programmatically configured and is managed by a software-based SDN controller

- It is Open Standard-based and Vendor-neutral

There is a flow table in the switches that remembers the connections. The routing policies are applied according to this table.

Deep packet inspection is performed by a layer 7 firewall. The firewall validates the flow and, if it is aware that the flow needs bandwidth, it allows the flow to bypass the redirection (through the firewall).
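The flow-table idea can be sketched as a cache of decisions: the first packet of a flow is sent to the controller (or firewall) for validation, and the following packets are forwarded straight from the table. Everything in this example (the `Switch` class, the flow key, `toy_controller` and its rule) is hypothetical and only illustrates the mechanism; it is not the API of any real SDN controller.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

FlowKey = Tuple[str, str, int]  # (source IP, destination IP, destination port)

@dataclass
class Switch:
    controller: Callable[[FlowKey], str]  # consulted only on a table miss
    flow_table: Dict[FlowKey, str] = field(default_factory=dict)
    controller_hits: int = 0

    def forward(self, key: FlowKey) -> str:
        """Return the action for a packet, asking the controller only once per flow."""
        action = self.flow_table.get(key)
        if action is None:
            self.controller_hits += 1
            action = self.controller(key)
            self.flow_table[key] = action  # remember the decision for this flow
        return action

def toy_controller(key: FlowKey) -> str:
    """Hypothetical policy: drop telnet, send everything else to the uplink."""
    _, _, dst_port = key
    return "drop" if dst_port == 23 else "out:uplink1"

switch = Switch(controller=toy_controller)
flow = ("10.0.0.1", "10.0.0.2", 443)
print(switch.forward(flow), switch.forward(flow), switch.controller_hits)
```

The second packet of the same flow never reaches the controller, which is the bypass behaviour described above for validated flows.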

Software-defined data center

The software-defined data center extends the previous concept: it applies a series of virtualization concepts, such as abstraction, pooling and automation, to all data center resources and services in order to achieve IT as a service.

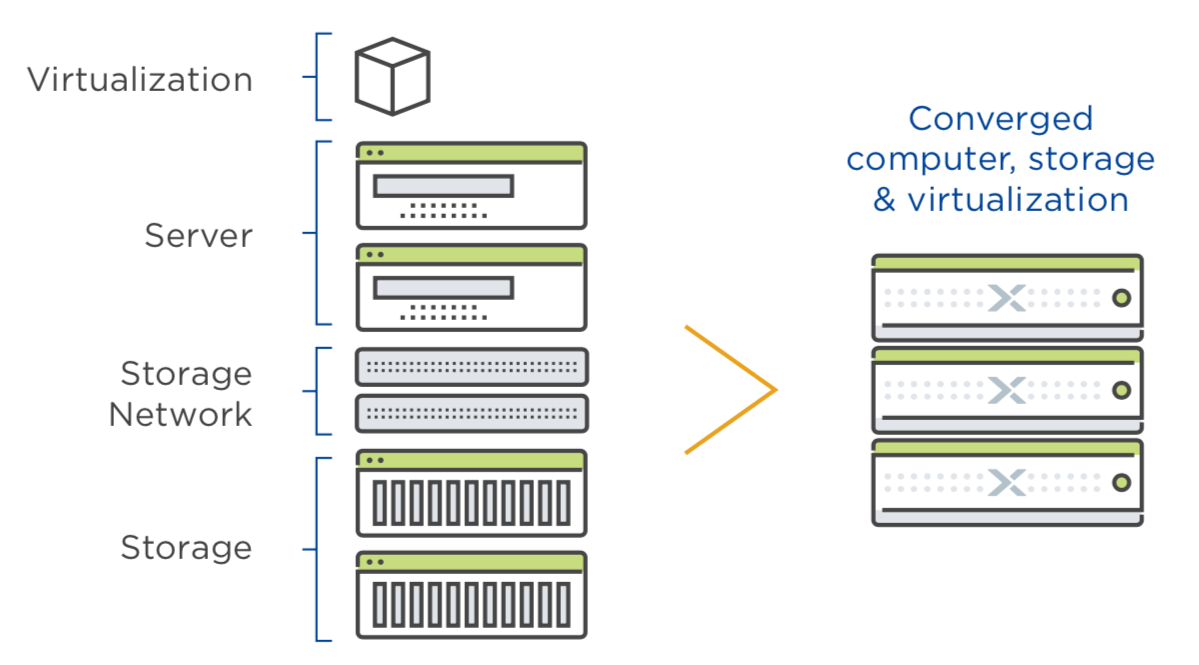

Hyper-convergence

So we virtualize the networking, the storage, the data center... and the cloud! Some vendors, such as Nutanix, build hyper-converged infrastructure (HCI) technology.

Hyper-converged infrastructure combines common datacenter hardware using locally attached storage resources with intelligent software to create flexible building blocks that replace legacy infrastructure consisting of separate servers, storage networks, and storage arrays.

Network topologies

A network topology is a way of cabling that allows multiple computers to communicate. It is not necessarily a graph, but for reliability purposes it is often realized as a set of connected nodes. At least 10% of the nodes should be connected in order to guarantee sufficient reliability (Small World Theory).

At layer 2 there is no routing table (it is a broadcast domain), even if there are some caching mechanisms. The topology is more like a tree than a graph, because some edges can be cut while preserving reachability and lowering the costs. In a layer 2 topology all computers talk to each other, and for that reason it does not scale.

The layer 2 topology is widely used for broadcasting.

At layer 3 there are routing tables, which are kept updated by a third party, the router. The L3 topology is mainly used for point-to-point communication.

Switches also hold tables, but they are used just as a cache: switches work even without them.

Introduction

Small-world theory

This theory, formulated by Watts and Strogatz, claims that 6 hops connect us with every person in the world.

According to their studies, given two strangers x and y, x can send a message to y just by asking his acquaintances to pass the message to someone closer to y. Hop by hop, the message reaches y going only through friends of friends. On average, this operation needs only 6 steps.

For this reason, a good network topology should take 6 hops on average to connect 2 machines.

Actually, a plain topology takes more than 6 hops, but by adding 10% of random links across the graph the number of hops easily collapses to 6.
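The effect of a few random links can be observed with a small simulation. The sketch below builds a ring where each node only knows its nearest neighbours, measures the average number of hops with a breadth-first search, then adds 10% of random links and measures again. The graph size, the neighbourhood width and the random seed are arbitrary assumptions, so the exact figures will differ from the 6 hops quoted above; the point is only the sharp drop.

```python
import random
from collections import deque

def ring_lattice(n: int, k: int) -> dict:
    """Ring of n nodes, each linked to its k nearest neighbours on each side."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for d in range(1, k + 1):
            j = (i + d) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj

def add_random_links(adj: dict, fraction: float, rng: random.Random) -> None:
    """Add random shortcuts amounting to `fraction` of the existing links."""
    n = len(adj)
    existing = sum(len(neigh) for neigh in adj.values()) // 2
    to_add = int(existing * fraction)
    while to_add > 0:
        a, b = rng.randrange(n), rng.randrange(n)
        if a != b and b not in adj[a]:
            adj[a].add(b)
            adj[b].add(a)
            to_add -= 1

def average_hops(adj: dict) -> float:
    """Average shortest-path length over all reachable pairs (BFS from each node)."""
    total = pairs = 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs

rng = random.Random(42)
graph = ring_lattice(n=200, k=2)
before = average_hops(graph)
add_random_links(graph, fraction=0.10, rng=rng)
after = average_hops(graph)
print(f"average hops without shortcuts: {before:.1f}")
print(f"average hops with 10% random links: {after:.1f}")
```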

Spanning Tree Protocol (STP)

First of all it is necessary to understand the loop problem. A loop is a cycle of links between nodes which creates a "DDoS-like" situation by flooding the network.

The Spanning Tree Protocol is a network protocol that builds a logical loop-free topology for Ethernet networks. Taking a node as root, it builds a spanning tree from the existing topology graph and disables all the arcs that are not part of it. The graph is thus converted into a tree.

In networking, the spanning tree is built by exchanging Bridge Protocol Data Units (BPDUs).
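The graph idea behind STP can be sketched in a few lines: starting from the root, keep the links that reach a new node and disable all the others. This is a simplification under stated assumptions: the real protocol elects the root and chooses links by exchanging BPDUs and comparing bridge IDs and path costs, whereas this example uses a plain breadth-first search on a hypothetical four-switch topology.

```python
from collections import deque

def spanning_tree(links, root):
    """Split links into (active, disabled) so that the active ones form a tree."""
    adj = {}
    for a, b in links:
        adj.setdefault(a, []).append(b)
        adj.setdefault(b, []).append(a)

    visited = {root}
    active = set()
    queue = deque([root])
    while queue:
        u = queue.popleft()
        for v in sorted(adj[u]):
            if v not in visited:  # this link reaches a new switch: keep it
                visited.add(v)
                active.add(frozenset((u, v)))
                queue.append(v)

    disabled = {frozenset(link) for link in links} - active
    return active, disabled

# Hypothetical topology: A, B and C form a loop, D hangs off C.
links = [("A", "B"), ("B", "C"), ("C", "A"), ("C", "D")]
active, disabled = spanning_tree(links, root="A")
print(sorted(sorted(link) for link in active))
print(sorted(sorted(link) for link in disabled))
```

The disabled links are the spare edges the protocol can re-enable when an active link fails.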

In 2001 the IEEE introduced Rapid Spanning Tree Protocol (RSTP) that provides significantly faster spanning tree convergence after a topology change.

The advantage of the Spanning Tree Protocol is that, when a link is unplugged, the network fixes itself in less than a minute by rebuilding a new tree with the edges previously discarded. However, nowadays it is used only in campus networks and not in datacenters, because its convergence latency (up to 10-15 seconds to activate a backup line) is not acceptable for an always-on system.

Network Chassis

The Network Chassis is a sort of big modular and resilient switch. At the bottom it has a pair of power plugs, and it is made of modular line cards (with some kind of ports) and a pair of RPMs (Routing Processing Modules, duplicated for redundancy) that ensure the line cards work. The chassis can be over-provisioned to resist aging, but it has a limit.

Pros

- resilient

- 1 CLI per switch

- expandable

Cons

- expensive

- not entirely future proof (today some switches may need up to 1KW power supply, while years ago they needed only 200 W)

- aging problem

The chassis is connected to the rack's ToR and BoR (top/bottom of rack) switches via a double link.

Stacking

Some network switches have the ability to be connected to other switches and operate together as a single unit. These configurations are called stacks, and are useful for quickly increasing the capacity of a network.

It's cheaper than the chassis, but there is less redundancy and it cannot be upgraded without losing connectivity.

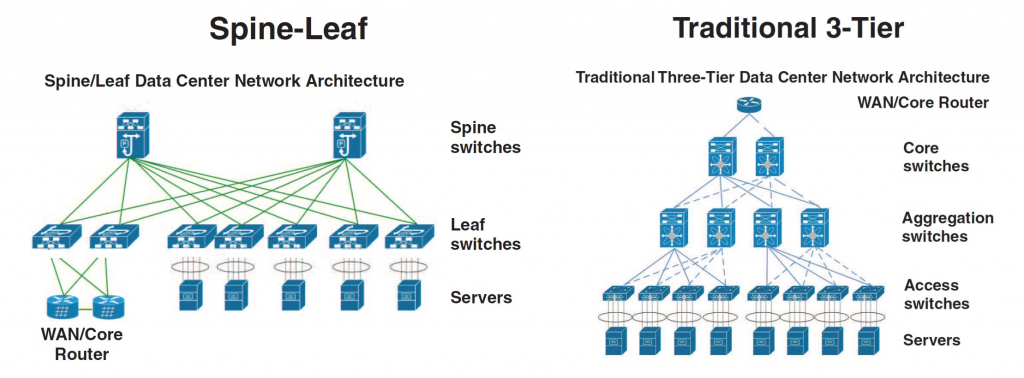

Three-tier design

Simple architecture consisting of core, aggregation and access switches connected in a hierarchy through pathways. Possible loops in those paths are prevented using the Spanning Tree Protocol, which also provides active-passive redundancy: the STP tree keeps only a subset of the links active.

However, this type of redundancy leads to inefficient east-west traffic, because devices connected to the same switch port may contend for bandwidth. Moreover, server-to-server communication might require long crossings between layers, causing latency and traffic bottlenecks. Hence, the three-tier design is not good for virtualization, because VMs should be able to move freely between servers without compromises.

Spine and leaf Architecture

Architecture suitable for large datacenters and cloud networks due to its scalability, reliability and better performance. It consists of two layers: the spine layer, which is made of switches that perform routing and that work as the backbone of the network, and the leaf layer, which is made of switches that connect to endpoints such as servers, storage devices, firewalls, load balancers and edge routers. Every leaf switch is interconnected to every spine switch of the network fabric. Using this topology, any server can communicate with any other server with no more than one interconnection switch path between any two leaf switches.

It is highly scalable: if the bandwidth is not enough, simply add a spine switch and connect it to all the leaf switches (this also reduces oversubscription, described in the Oversubscription section); if the ports are not enough, simply add a new leaf switch and connect it to all the spine switches.

Loops are prevented using the Link Aggregation Control Protocol (LACP): it aggregates two different physical links between two devices into a logical point-to-point link. That means that both links can be used to communicate, increasing the bandwidth and gaining active-active redundancy in case of failure of a link (ensuring no loops because each link is a single channel). Hence, the leaf-spine design provides a more stable and reliable network performance.

Usually in a spine and leaf architecture the NS traffic, which connects the datacenter to the Internet, is comparatively light, while the EW traffic, which is server-to-server and rack-to-rack, is very intensive.

Characteristics:

- fixed form factor (non modular switches)

- active-active redundancy

- loop aware topology (a tree topology with no links disabled for redundancy reasons).

- interconnect using standard cables (decide how many links to use to interconnect spines with leaves and how many to link to the racks).

With this architecture it's possible to turn off one switch, upgrade it and reboot it without compromising the network. Half of the bandwidth is lost in the process, but the twin switch keeps the connection alive.

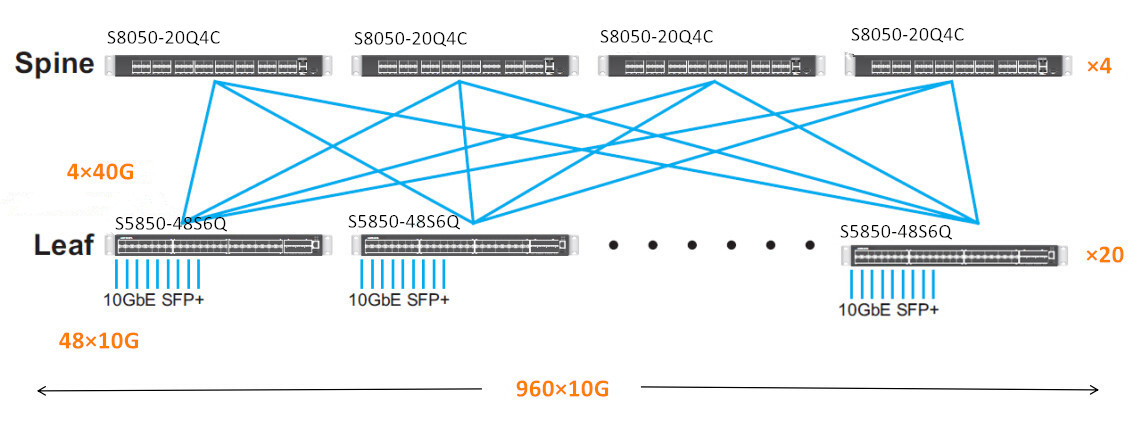

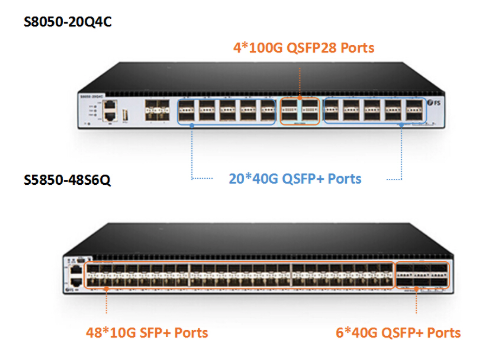

A typical configuration of the ports and bandwidth of the leaves is:

- 1/3 going upwards and 2/3 going downwards

- 48 ports 10 Gbps each (downward - from leaves to racks)

- plus 6 ports 40 Gbps each (upward - from leaves to spines)

- or 48 ports 25 Gbps each (downward)

- plus 6 ports 100 Gbps each (upward)

Just a small remark: with spine and leaf we introduce more hops, and so more latency, than with the chassis approach. The solution to this problem is to use a huge switch (256 ports) as the base of the spine, which actually acts as a chassis, in order to reduce the number of hops and the latency.

Oversubscription

Oversubscription is the practice of connecting multiple devices to the same switch port to optimize its use. For example, it is particularly useful to connect multiple slower devices to a single port, to take advantage of the unused capacity of the port and improve its utilization. However, devices and applications that require high bandwidth should generally connect to a switch port 1-on-1, because multiple devices connected to the same switch port may contend for that port's bandwidth, resulting in poor response time. Hence, significant increases in the use of multi-core CPUs, server virtualization, flash storage, Big Data and cloud computing have driven the requirement for modern networks to have lower oversubscription. For this reason, it is important to keep the oversubscription ratio in mind when designing your fabric.

In a leaf-spine design, oversubscription is measured as the ratio of downlink bandwidth (to servers/storage) to uplink bandwidth (to spine switches). Current modern network designs have oversubscription ratios of 3:1 or less. For example, if you have 20 servers each connected with 10 Gbps downlinks (leaf switches - servers) and 4 10 Gbps uplinks (leaf switches - spine switches), you have a 5:1 oversubscription ratio (200 Gbps / 40 Gbps).

Is it possible to achieve a degree of oversubscription equal to 1?

Yes, and it is possible by just linking half the ports upwards and half down. This is the basis of the full fat tree.
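The ratios discussed in this section can be computed as downlink bandwidth over uplink bandwidth. A minimal sketch follows, with an illustrative function name; the three cases are the 20-server example, the 48x10 / 6x40 Gbps leaf configuration listed earlier, and a hypothetical 48-port leaf with half of its ports up and half down.

```python
from fractions import Fraction

def oversubscription(down_ports: int, down_gbps: int, up_ports: int, up_gbps: int) -> Fraction:
    """Downlink bandwidth divided by uplink bandwidth of a leaf switch."""
    return Fraction(down_ports * down_gbps, up_ports * up_gbps)

cases = {
    "20 servers at 10 Gbps, 4 uplinks at 10 Gbps": (20, 10, 4, 10),
    "48 x 10 Gbps down, 6 x 40 Gbps up": (48, 10, 6, 40),
    "half of the ports up, half down (24 + 24 at 10 Gbps)": (24, 10, 24, 10),
}
for label, args in cases.items():
    ratio = oversubscription(*args)
    print(f"{label}: {ratio.numerator}:{ratio.denominator}")
```

The last case is the 1:1 situation that the full fat tree is built on.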

Some considerations about numbers

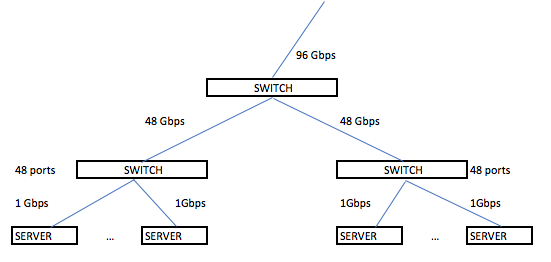

Let's start thinking about the real world. We have some servers with 1 Gbps links (not such a high speed: it is what you can reach with your laptop by plugging in a cable in a university classroom). We have to connect these servers to each other using switches (each of them with 48 ports). We have lots of servers... The computation is done.

As we see, we need a lot of bandwidth to manage a lot of services. Even if the north-south traffic (the traffic that leaves our datacenter) can be relatively small (the university connects to the outside world at 40 Gbps), the east-west traffic (the traffic inside the datacenter) can reach a very large number of Gbps. The Aruba datacenter called IT1 and another Aruba datacenter (IT2) together reach 82 Gbps of Internet connection bandwidth.

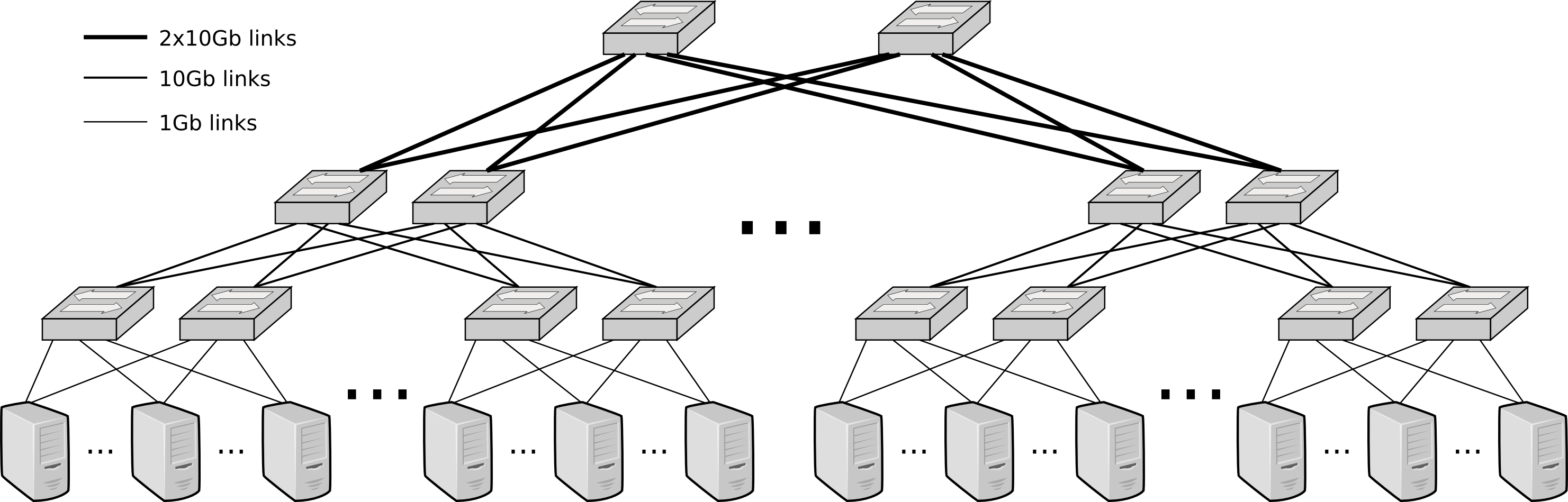

Full Fat Tree

In this network topology, the links that are nearer the top of the hierarchy are "fatter" (thicker, which means higher bandwidth) than the links further down the hierarchy. It is used only in high performance computing, where performance has priority over budget.

The full fat tree resolves the problem of over-subscription. Adopting the spine and leaf there is the risk that the links closer to the spines can't sustain the traffic coming from all the links going from the servers to the leaves. The full fat tree is a way to build a tree so that the capacity is never less than the incoming traffic. It's quite expensive and because of this reason some over subscription can be accepted.

VLAN

Now the problem is that every switch can be connected to every other switch, so there is no longer any LAN separation in the datacenter: every packet can go wherever it wants, and some problems may appear. VLANs solve this problem by partitioning a broadcast domain and creating isolated computer networks.

A virtual LAN (VLAN) is a virtual network consisting of virtual and/or physical switches, which divides a LAN into smaller logical segments. A VLAN groups the nodes with a common set of functional requirements, independent of the physical location of the nodes. In a multi-tenant cloud environment, the provider typically creates and assigns a separate VLAN to each consumer. This provides a private network and IP address space to a consumer, and ensures isolation from the network traffic of other consumers.

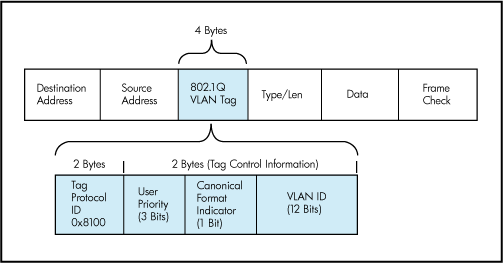

It works by applying tags (VLAN IDs from 1 to 4094) to Ethernet frames and handling these tags in the networking equipment.

A switch can be configured to accept some tags on some ports and some other tags on some other ports.
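To make the tagging concrete, here is a minimal sketch of the 4-byte IEEE 802.1Q tag and of a per-port allow-list. The tag layout (TPID `0x8100`, 3-bit priority, 1-bit DEI, 12-bit VLAN ID) comes from the standard; the port names and the `ALLOWED` table are hypothetical and only illustrate the idea of accepting some tags on some ports.

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that marks an 802.1Q-tagged frame


def build_vlan_tag(vlan_id: int, priority: int = 0) -> bytes:
    """Pack the tag: 16-bit TPID, then 3-bit priority, 1-bit DEI, 12-bit VLAN ID."""
    if not 1 <= vlan_id <= 4094:
        raise ValueError("usable VLAN IDs are 1..4094")
    if not 0 <= priority <= 7:
        raise ValueError("priority is a 3-bit field")
    tci = (priority << 13) | vlan_id  # DEI bit is left at 0
    return struct.pack("!HH", TPID_8021Q, tci)


def parse_vlan_id(tag: bytes) -> int:
    """Extract the 12-bit VLAN ID from a 4-byte 802.1Q tag."""
    tpid, tci = struct.unpack("!HH", tag)
    if tpid != TPID_8021Q:
        raise ValueError("not an 802.1Q tag")
    return tci & 0x0FFF


# Hypothetical switch configuration: which VLAN IDs each port accepts.
ALLOWED = {"port1": {10, 20}, "port2": {30}}


def port_accepts(port: str, tag: bytes) -> bool:
    """Return True if the port is configured to accept the tagged frame."""
    return parse_vlan_id(tag) in ALLOWED.get(port, set())


print(build_vlan_tag(10).hex())                   # 8100000a
print(port_accepts("port1", build_vlan_tag(20)))  # True
print(port_accepts("port2", build_vlan_tag(20)))  # False
```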

VLANs are useful for managing access control to resources (for example, preventing access to one subnetwork from another). Different VLANs are usually used for different purposes.

Switch Anatomy

A switch is built around an ASIC (Application-Specific Integrated Circuit), which can have a proprietary or non-proprietary architecture. There are two types of switches: Layer 2 and Layer 3. The main difference is the routing function: a Layer 2 switch only deals with MAC addresses, while a Layer 3 switch also handles IP addresses and manages VLANs and inter-VLAN communication. In both layers there is no loop problem.

Datacenter switches are usually non-blocking. This basically means that these switches have enough forwarding capacity to serve all ports concurrently at full speed.

Some standards are now trying to impose a common structure on network elements (switches included) to facilitate the creation of standard orchestration and automation tools.

Internally, a switch is made of a control plane, which is configurable, and a data plane, which contains the ports and where the actual switching happens. The control plane has evolved over the years: it now runs an OS on an Intel CPU. The control plane can be configured through a CLI (Command Line Interface). Some example commands are:

- show running config

- show interfaces status

- show vlan

- config (to enter in config mode)

Some protocols in the switch (bold ones are important):

- PING to test connectivity.

- LLDP Link Layer Discovery Protocol (a way to explore the graph).

- STP Spanning Tree Protocol (to avoid loops).

- RSTP Rapid-STP

- DCBX Data Center Bridging eXchange (QoS, priority)

- PFC Priority Flow Control

- ETS Enhanced Transmission Selection (priority)

- LACP Link Aggregation Control Protocol (use two wires as if they were one).

ONIE (Open Networking Installed Environment) boot loader

The switch has a firmware and two slots for OS images. When updating, the first slot holds the old OS image and the second slot the new one.

NFV Network Functions Virtualization (5G mostly NFV based)

The data plane is connected to a DC's VM which acts as a control plane.

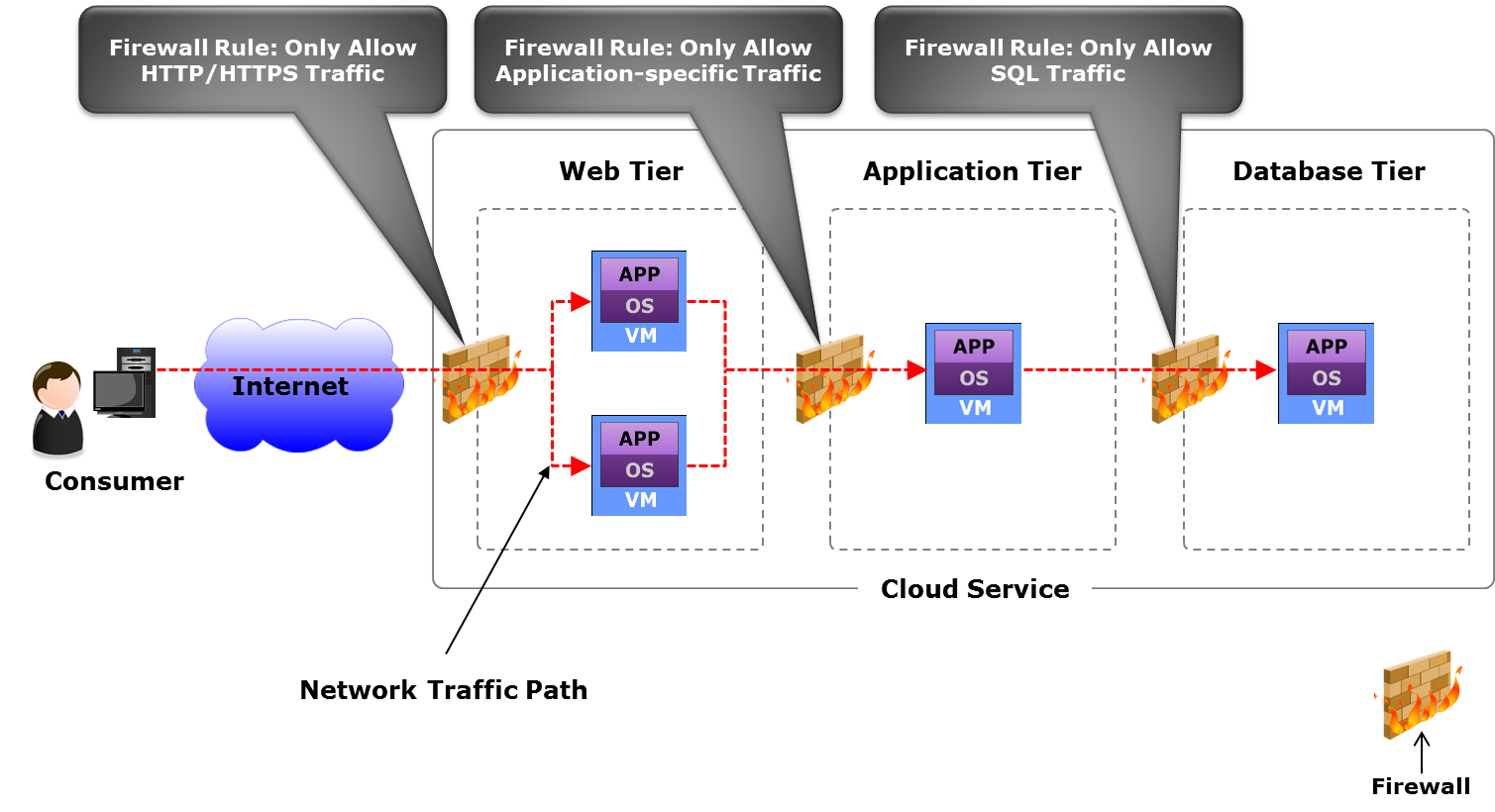

Network topology with firewalls

A firewall can only perform security checks on a flow; it cannot manage the flow itself. Furthermore, it is not possible to let the entire traffic pass through the firewall, because it would become a bottleneck. For that reason, after the security checks the firewall diverts the flow directly to routers and switches through the OpenFlow API.

Disks and Storage

IOPS: Input/output operations per second is an input/output performance measurement used to characterize computer storage devices (associated with an access pattern: random or sequential).

System Bus Interfaces

- SATA: requires a controller, which is slow and becomes the bottleneck

- SAS (Serial Attached SCSI)

- NVMe (Non Volatile Memory express): controller-less, protocol used over PCI express bus

- ...

Redundancy

RAID stands for Redundant Array of Independent Disks. The RAID is done by the disk controller or the OS.

The more common RAID configurations are:

- RAID-0: striping, two drives aggregated that work as a single one (no fault tolerance)

- RAID-1: mirroring, write on both the drives, one is the copy of the other.

- RAID-5: block-level striping with distributed parity. It is XOR based: conceptually, the first bit goes to the first disk, the second bit to the second disk and their XOR to the third. If one disk crashes, its content can be recomputed from the other two. For every two bits of information one extra bit is needed, so with three disks one third of the raw capacity holds parity. This gives fault tolerance comparable to mirroring with only 50% extra space instead of 100%.

- RAID-6: block-level striping with double distributed parity. Similar to RAID-5 but with a second parity block, so it survives the failure of two disks.
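The XOR reasoning behind RAID-5 can be checked in a few lines. This is a simplified sketch with two data blocks and one parity block; a real RAID-5 array works on larger blocks and rotates the parity across the disks, which is not modelled here.

```python
def xor_blocks(a: bytes, b: bytes) -> bytes:
    """Return the byte-wise XOR of two equally sized blocks."""
    if len(a) != len(b):
        raise ValueError("blocks must have the same size")
    return bytes(x ^ y for x, y in zip(a, b))


disk1 = b"ICT-"                    # first data block
disk2 = b"INFR"                    # second data block
parity = xor_blocks(disk1, disk2)  # stored on the third disk

# Suppose disk2 fails: its content is the XOR of the two surviving disks.
recovered = xor_blocks(disk1, parity)
print(recovered == disk2)          # True
```

The same function recovers `disk1` from `disk2` and `parity`, because XOR is its own inverse.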

Memory Hierarchy

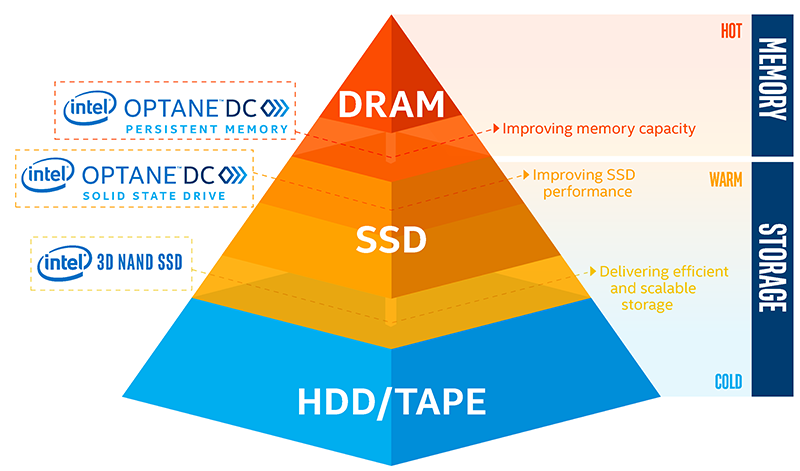

Tiering is a technology that categorizes data to choose different type of storage media to reduce the total storage cost. Tiered storage policies place the most frequently accessed data on the highest performing storage. Rarely accessed data goes on low-performance, cheaper storage.

Caches:

- CPU registers

- CPU Cache

Memory tiering:

- RAM

- nvRAM (uses nvDIMM)

Storage tiering:

- SSD Memory

- Hard drive

- Tape

NVMe

It is a protocol on the PCI Express bus and it is totally controller-less. From the software side it is simpler to talk to the disk this way, because the drive is directly attached to the PCIe bus: there is no controller and the latency is lower.

A bus is a component to which different devices can be attached. It has a clock and some lanes (16 in PCIe v3, ~15 GBps in total because each lane carries slightly less than 1 GBps). Four drives are enough to exhaust a full PCIe v3 bus: an NVMe SSD has a bandwidth of about 3.5 GBps, so 3.5 * 4 = 14 GBps, which almost fills the 15 GBps of the bus. They are also capable of saturating a 100 Gbps link, since 14 GBps is 112 Gbps.
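The bandwidth figures above can be verified with a short calculation. The constants are the approximate values quoted in these notes, not measurements, and the helper names are illustrative.

```python
NVME_GBYTES_PER_S = 3.5        # per-drive throughput quoted in the text
PCIE3_X16_GBYTES_PER_S = 15.0  # approximate bus capacity quoted in the text
LINK_GBITS_PER_S = 100.0       # network link used for comparison


def aggregate_gbytes(drives: int) -> float:
    """Total throughput of several NVMe drives, in gigabytes per second."""
    return drives * NVME_GBYTES_PER_S


def to_gbits(gbytes_per_s: float) -> float:
    """Convert gigabytes per second to gigabits per second."""
    return gbytes_per_s * 8


total = aggregate_gbytes(4)
print(total)                                # 14.0 GBps, close to the 15 GBps bus
print(to_gbits(total))                      # 112.0 Gbps
print(to_gbits(total) > LINK_GBITS_PER_S)   # True: the 100 Gbps link is saturated
```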

NVMe has now almost totally replaced SATA, since the latter uses 2 PCIe lanes and for that reason is the bottleneck given current SSD speeds.

Furthermore, NVMe is often used as the lowest tier of RAM: it is only about one order of magnitude slower than RAM, but can be very large without any problem. For that reason it is a valid super-fast cache level behind the RAM, and the two have started to be combined into a single level to implement a big RAM tier, in a way that is totally transparent to the system.

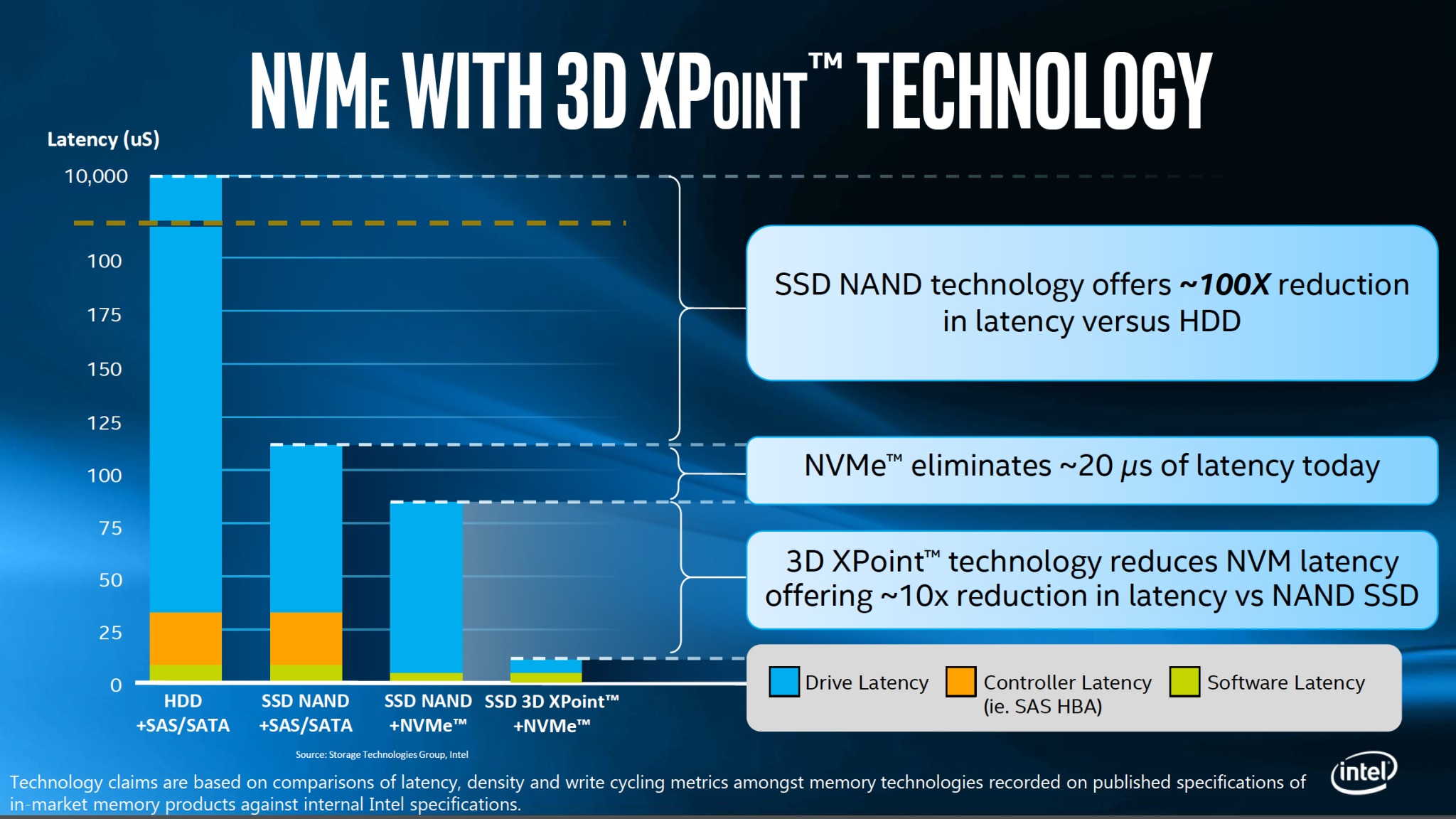

The software latency of a disk I/O is about 5 microseconds, while the TCP/IP software stack introduces a latency of 70-80 microseconds, so the disk is no longer the problem. The problem is now the network, not only for latency but also for bandwidth: 4 NVMe drives totally saturate a 100 Gbps network.

nvDIMM

nvDIMM (non-volatile Dual Inline Memory Module) is used to save energy. It allows changing the amount of current given to each line, providing only as much as an SSD needs to write.

Memory power consumption is a problem, because memory usually consumes more current than the CPU; moreover, for RAM to persist across a reboot it needs to be battery-powered, which is very expensive.

With the advent of SSD and NVMe things changed, since persistent memory now reaches high speeds: non-volatile memory does not need power except when performing I/O operations, and its data does not need to be refreshed periodically to avoid data loss.

nvDIMM allows SSDs to be put on the memory bus, like RAM, instead of on the PCIe bus, like storage.

Misc

- Processes can share memory through the memory mapping technique (the memory is seen as a file).

- Besides volatile RAM, it is now possible to have persistent-state RAM.

- With Intel Optane, storage is only 35% slower than RAM, so there is a need to support a large non-volatile memory tier with super-fast access.
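The memory mapping technique from the first point of the list can be sketched with Python's standard `mmap` module. For simplicity both sides run in the same process here; two separate processes mapping the same file would see each other's writes in the same way. The helper names and the 4096-byte file size are assumptions made for this example.

```python
import mmap
import os
import tempfile


def write_shared(path: str, payload: bytes) -> None:
    """Map the file into memory and write through the mapping."""
    with open(path, "r+b") as f:
        with mmap.mmap(f.fileno(), 0) as mem:
            mem[:len(payload)] = payload  # looks like a memory write
            mem.flush()                   # push the change to the file


def read_shared(path: str, length: int) -> bytes:
    """Map the same file read-only and read it like a byte array."""
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mem:
            return mem[:length]


def demo() -> bytes:
    """Create a backing file, write through one mapping, read through another."""
    fd, path = tempfile.mkstemp()
    try:
        os.write(fd, b"\x00" * 4096)  # a mapping needs a non-empty file
        os.close(fd)
        write_shared(path, b"hello")
        return read_shared(path, 5)
    finally:
        os.remove(path)


print(demo())  # b'hello'
```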

Storage aggregation

Actually, the problem with hard drives is not throughput but latency. HDDs offer a large bandwidth and are fast on contiguous data, but they have a high latency on sparse data, where they are very slow.

Latency is due to:

- software (filesystem, OS, ...): on the order of microseconds, cannot be removed

- controller: can be reduced, e.g. with NVMe it is just 20 µs

- HDD latency: can drastically be reduced with SSD, and is even smaller with 3D NAND

These problems are addressed by storage aggregation, a strategy for accessing drives in parallel instead of sequentially.

It is the concept of splitting data across several disks and then presenting the whole system as a single huge drive (the concept of resource pooling in cloud computing).

The strategy for accessing the drives makes the difference.
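A minimal sketch of this idea is round-robin striping: the data is cut into chunks, the chunks are dealt out to the disks in turn, and the reader puts them back together as if there were a single drive. The disks are modelled as in-memory lists and the chunk size of 4 bytes is an arbitrary assumption.

```python
def stripe(data: bytes, disks: int, chunk: int = 4) -> list[list[bytes]]:
    """Distribute fixed-size chunks of data over the disks in round-robin order."""
    if disks < 1 or chunk < 1:
        raise ValueError("need at least one disk and a positive chunk size")
    layout: list[list[bytes]] = [[] for _ in range(disks)]
    for offset in range(0, len(data), chunk):
        layout[(offset // chunk) % disks].append(data[offset:offset + chunk])
    return layout


def reassemble(layout: list[list[bytes]]) -> bytes:
    """Read the chunks back row by row, presenting the disks as one drive."""
    out = []
    depth = max(len(disk) for disk in layout)
    for row in range(depth):
        for disk in layout:
            if row < len(disk):
                out.append(disk[row])
    return b"".join(out)


data = bytes(range(50))
layout = stripe(data, disks=3)
print([len(disk) for disk in layout])  # [5, 4, 4] chunks per disk
print(reassemble(layout) == data)      # True
```

In a real system the chunks held by different disks can be fetched at the same time, which is where the latency gain comes from; this sketch only shows the layout.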

Fibre Channel is the kind of fabric dedicated to storage. The link coming from the storage ends in the Host Bus Adapter (HBA) in the server.

Storage system architectures

Storage system architectures are based on data access methods whose common variants are:

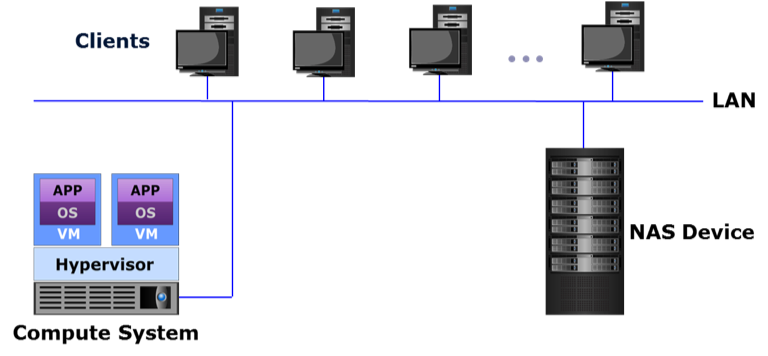

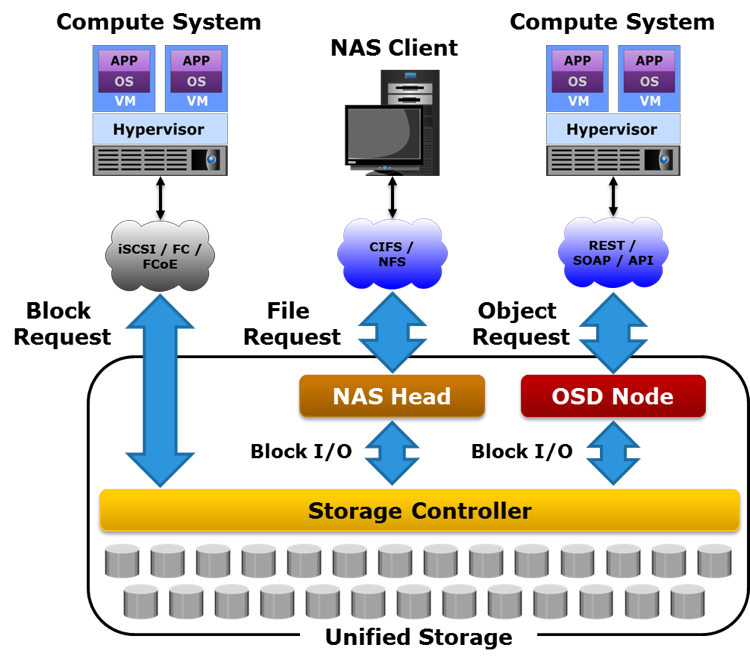

- file-based (NAS): a file-based storage system, also known as Network-Attached Storage (NAS), is a dedicated, high-performance file server having either integrated storage or connected to external storage. NAS enables clients to share files over an IP network. NAS supports NFS and CIFS protocols to give both UNIX and Windows clients the ability to share the same files using appropriate access and locking mechanisms. NAS systems have integrated hardware and software components, including a processor, memory, NICs, ports to connect and manage physical disk resources, an OS optimized for file serving, and file sharing protocols.

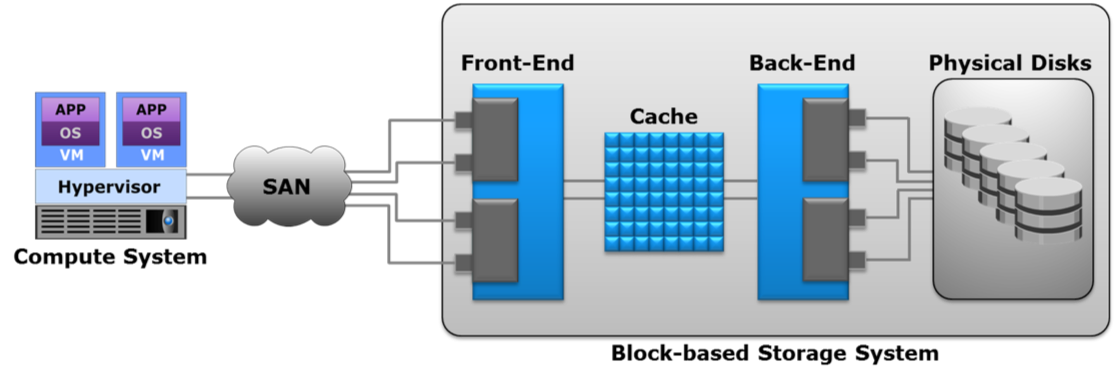

- block-based (SAN): a block-based storage system enables the creation and assignment of storage volumes to compute systems. The compute OS (or hypervisor) discovers these storage volumes as local drives. A file system can be created on these storage volumes, for example NTFS in a Windows environment, which can then be formatted and used by applications.

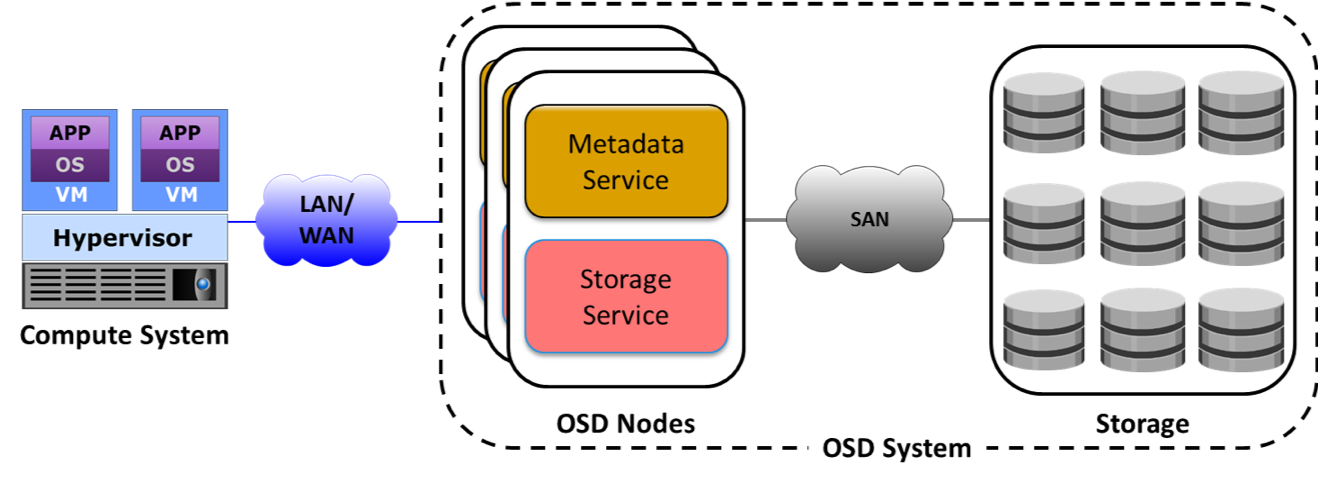

- object-based: object-based storage is a way to store file data in the form of objects based on the content and other attributes of the data rather than the name and location of the file. An object contains user data, related metadata (size, date, ownership, etc.), and user defined attributes of data (retention, access pattern, and other business-relevant attributes). The additional metadata or attributes enable optimized search, retention and deletion of objects. The object-based storage system uses a flat, non-hierarchical address space to store data, providing the flexibility to scale massively. Cloud service providers leverage object-based storage systems to offer Storage as a Service because of its inherent security, scalability, and automated data management capabilities. Object-based storage systems support web service access via REST and SOAP. Eg. AWS S3.

- unified: unified storage or multi-protocol storage has emerged as a solution that consolidates block, file, and object-based access within one storage platform. It supports multiple protocols such as CIFS, NFS, iSCSI, FC, FCoE, REST, and SOAP for data access
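To illustrate the object-based variant from the list above, here is a toy in-memory object store with a flat namespace, where each object carries data, system metadata and user-defined attributes. Deriving the object ID from a SHA-256 hash of the content is an assumption made for this sketch; real systems choose their own ID scheme, and the class and method names are hypothetical.

```python
import hashlib
import time


class ObjectStore:
    """Toy object store: flat namespace mapping object ID to (metadata, data)."""

    def __init__(self) -> None:
        self._objects: dict[str, tuple[dict, bytes]] = {}

    def put(self, data: bytes, **attributes) -> str:
        """Store data with user-defined attributes and return its object ID."""
        object_id = hashlib.sha256(data).hexdigest()  # assumption: content-derived ID
        metadata = {"size": len(data), "created": time.time(), **attributes}
        self._objects[object_id] = (metadata, data)
        return object_id

    def get(self, object_id: str) -> bytes:
        return self._objects[object_id][1]

    def metadata(self, object_id: str) -> dict:
        return dict(self._objects[object_id][0])

    def find(self, **attributes) -> list[str]:
        """Search by attributes instead of by path, as there is no hierarchy."""
        return [oid for oid, (meta, _) in self._objects.items()
                if all(meta.get(k) == v for k, v in attributes.items())]


store = ObjectStore()
oid = store.put(b"report", retention="7y", owner="alice")
print(store.get(oid))                      # b'report'
print(store.metadata(oid)["size"])         # 6
print(store.find(retention="7y") == [oid]) # True
```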

iSCSI: Internet Small Computer Systems Interface, an IP-based storage networking standard for linking data storage facilities. It provides block-level access to storage devices by carrying SCSI commands over a TCP/IP network.

Network Attached Storage (NAS)

NAS is a file-level computer data storage server connected to a computer network providing data access to a heterogeneous group of clients. NAS systems are networked appliances which contain one or more storage drives, often arranged into logical, redundant storage containers or RAID. They typically provide access to files using network file sharing protocols such as NFS, SMB/CIFS, or AFP over an optical fiber.

Basically the whole storage is exposed as a file system. When using a network file system protocol, you are using a NAS.

Storage Area Network (SAN)

A network of compute systems and storage systems is called a Storage Area Network (SAN). A SAN enables the compute systems to access and share storage systems. Sharing improves the utilization of the storage systems. Using a SAN facilitates centralizing storage management, which in turn simplifies and potentially standardizes the management effort.

SANs are classified based on protocols they support.

Common SAN deployment types are Fibre Channel SAN (FC SAN), Internet Protocol SAN (IP SAN), Fibre Channel over Ethernet SAN (FCoE SAN), ATA over Ethernet (AoE) and HyperSCSI. A SAN can be implemented as a set of controllers attached to JBODs (Just a Bunch of Disks).

While NAS provides both storage and a file system, SAN provides only block-based storage and leaves file system concerns on the "client" side. However, note that a NAS can be part of a SAN network.

The SAN can be divided into different Logical Unit Numbers (LUNs). A LUN abstracts the identity and internal functions of the storage systems and appears as physical storage to the compute system.

- The storage capacity of a LUN can be dynamically expanded or reduced (virtual storage provisioning: it makes it possible to present a LUN to an application with more capacity than is physically allocated to it on the storage system).

- A LUN can be created from:

  - RAID set (traditional approach): suited for applications that require predictable performance

  - Storage pool: LUNs can be created from the storage pool that comprises a set of physical drives that provide the actual physical storage used by the volumes. Appropriate for applications that can tolerate performance variations.
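Virtual storage provisioning from a pool can be sketched as follows: the LUN advertises more capacity than the pool physically holds, and physical space is taken from the pool only on the first write to each region. The class names and the unit of allocation (an "extent" identified by an index) are assumptions made for illustration.

```python
class StoragePool:
    """Physical capacity shared by several LUNs, counted in extents."""

    def __init__(self, physical_extents: int) -> None:
        self.free_extents = physical_extents

    def allocate(self) -> None:
        if self.free_extents == 0:
            raise RuntimeError("storage pool exhausted")
        self.free_extents -= 1


class ThinLun:
    """LUN that advertises a size but allocates extents only when written."""

    def __init__(self, pool: StoragePool, advertised_extents: int) -> None:
        self.pool = pool
        self.advertised_extents = advertised_extents
        self._extents: dict[int, bytes] = {}

    def write(self, extent_index: int, data: bytes) -> None:
        if not 0 <= extent_index < self.advertised_extents:
            raise IndexError("write beyond the advertised capacity")
        if extent_index not in self._extents:
            self.pool.allocate()  # physical space is consumed on first write
        self._extents[extent_index] = data

    def read(self, extent_index: int) -> bytes:
        return self._extents.get(extent_index, b"")  # unwritten extents are empty

    @property
    def allocated_extents(self) -> int:
        return len(self._extents)


pool = StoragePool(physical_extents=10)
lun = ThinLun(pool, advertised_extents=100)  # 100 advertised, 10 physical
lun.write(42, b"x")
lun.write(42, b"y")                           # overwrite: no new allocation
print(lun.allocated_extents, pool.free_extents)  # 1 9
```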

If the drive is seen as physically attached to the machine and a block transmission protocol is adopted, you are using a SAN. The optical fiber has become the bottleneck (just four drives saturate a link).

With a SAN the server has the impression that the LUN is attached to it directly and locally; with a NAS there is no such abstraction.

HCI - Hyperconverged Systems

- Nutanix: is the current leader of this technology

- Ceph: is a different architecture/approach

- vSAN

- S2D - Storage Spaces Direct

This kind of software is expensive (Nutanix HCI is fully software defined, so you do not depend on the vendor's hardware).

The main idea is not to design three different systems (compute, networking, storage) and then connect them, but to have a bit of each in every server that is deployed. "Adding servers adds capacity".

The software works through the cooperation of controllers (VMs), one in each node (server). The controller VM implements the storage abstraction across the nodes and also implements the logical movement of data. Every write keeps a copy on the local server's storage, exploiting the PCIe bus and avoiding the network bottleneck; another copy of the data is given to the controller of another node. Reads are performed locally, gaining high performance. The VM is aware that there are two copies of the data, so it can exploit this fact. When a drive fails, the surviving copy is used to create another copy of the data. The write operation is a little slower, since it must wait for the 'ack' from the other controller confirming that the replica has been written on another node (synchronous replica).
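The write and read paths described above can be sketched with a toy cluster. This is not how Nutanix or any specific product is implemented: the `Node` and `Cluster` classes, the choice of the first available peer as replica target and the in-memory dictionaries are all assumptions made to show the local-write plus synchronous-replica idea.

```python
class Node:
    """A server with local storage and a controller that acknowledges writes."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.storage: dict[str, bytes] = {}
        self.alive = True

    def store(self, key: str, data: bytes) -> bool:
        if not self.alive:
            return False
        self.storage[key] = data
        return True  # this return value plays the role of the 'ack'


class Cluster:
    def __init__(self, nodes: list[Node]) -> None:
        self.nodes = nodes

    def write(self, local: Node, key: str, data: bytes) -> str:
        """Write locally, then wait for one peer to acknowledge the replica."""
        if not local.store(key, data):
            raise RuntimeError("local node is down")
        for peer in self.nodes:
            if peer is not local and peer.store(key, data):
                return peer.name  # name of the node holding the replica
        raise RuntimeError("no peer acknowledged the replica")

    def read(self, local: Node, key: str) -> bytes:
        """Prefer the local copy; fall back to the replica if the node failed."""
        if local.alive and key in local.storage:
            return local.storage[key]
        for peer in self.nodes:
            if peer is not local and peer.alive and key in peer.storage:
                return peer.storage[key]
        raise KeyError(key)


a, b, c = Node("a"), Node("b"), Node("c")
cluster = Cluster([a, b, c])
print(cluster.write(a, "vm-disk", b"data"))  # b
a.alive = False                              # simulate a failure of the local node
print(cluster.read(a, "vm-disk"))            # b'data', served from the replica
```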

SDS - Software Defined Storage

Software-defined Storage is a term for computer data storage software for policy-based provisioning and management of data storage independent of the underlying hardware. This type of software includes a storage virtualization to separate storage hardware from the software that manages it.

It is used to build a distributed system that provides storage services. It uses an object-based storage architecture (objectID, metadata, binary data).

Non-RAID drive architectures

Other architectures also exist and are used when RAID is too expensive or not required.

- JBOD ("just a bunch of disks"): multiple hard disk drives operated as individual independent hard disk drives

- SPAN: A method of combining free space on multiple hard disk drives from "JBoD" to create a spanned volume

- DAS (Direct-attached storage): a digital storage directly attached to the computer accessing it.

Some considerations about Flash Drives

The bottleneck in new drives is the connector. The SATA connector is too slow to use an SSD at its maximum speed. Some results can be seen here.

The solution? Remove the connector and attach the drive to PCIe. A new specification is used for this, NVMe, an open logical device interface specification for accessing non-volatile storage media attached via a PCI Express bus.

Storage in the future


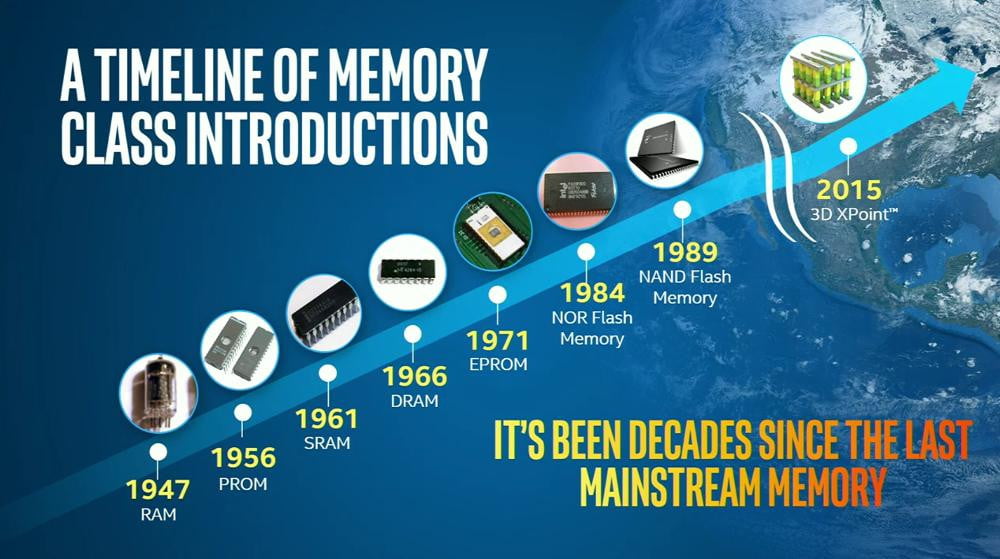

As we can see in the image, it has been decades since the last mainstream memory technology update. In fact, SSDs became popular only in recent years thanks to their cost, but they have existed since 1989.

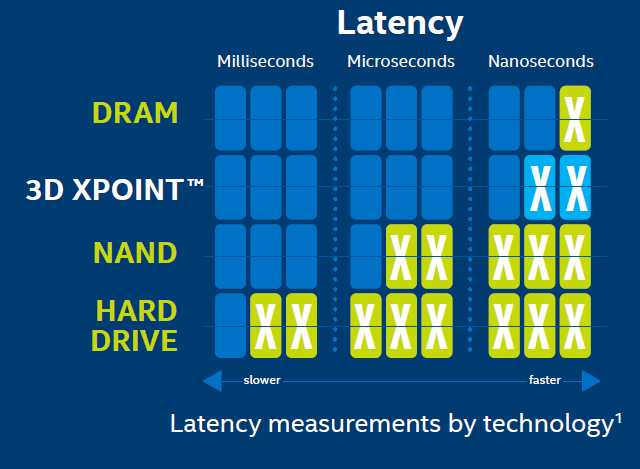

A new technology, 3D XPoint, was introduced in 2015. Does this improvement take the ICT world into a new phase? If yesterday our problem was disk latency, so that we designed all algorithms to reduce I/O operations, now the disk is almost as fast as DRAM.

Servers

They are really different from desktops: the only common part is the CPU instruction set.

For instance, servers have ECC memory, with an Error Correction Code built in.

Racks are divided into units: 1U is the minimum size you can allocate in a rack. A 2-meter rack generally has 42 units.
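The relation between rack units and physical height is a simple conversion. One rack unit is 1.75 inches; the helper name below is illustrative.

```python
RACK_UNIT_INCHES = 1.75  # height of 1U
CM_PER_INCH = 2.54


def rack_height_cm(units: int) -> float:
    """Return the usable mounting height of the given number of rack units."""
    return units * RACK_UNIT_INCHES * CM_PER_INCH


print(round(rack_height_cm(1), 1))   # 4.4 cm for a 1U server
print(round(rack_height_cm(42), 1))  # 186.7 cm, which fits a rack about 2 m tall
```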

Types of compute systems

- Rack-mounted: a rack-mounted compute system is a compute system designed to be fixed on a frame called a “rack”. A rack is a standardized enclosure containing multiple mounting slots, each of which holds a server. A single rack contains multiple servers stacked vertically, thereby simplifying network cabling, consolidating network equipment, and reducing floor space use. Each rack server has its own power supply and cooling unit. A “rack unit” (denoted by U or RU) is a unit of measure of the height of a server designed to be mounted on a rack. One rack unit is 1.75 inches (~4.5cm). A rack server is typically 19 inches (~48cm) in width and 1.75 inches (~4.5cm) in height. This is called a 1U rack server. Other common sizes of rack servers are 2U and 4U. Some common rack cabinet sizes are 27U, 37U, and 42U. Typically, a console with a video screen, keyboard, and mouse is mounted on a rack to enable administrators to manage the servers in the rack. Some concerns with rack servers are that they are cumbersome to work with, and that they generate a lot of heat, which requires more cooling and in turn increases power costs.

- Blade: a blade compute system, also known as a blade server, contains only core processing components, such as processor(s), memory, integrated network controllers, storage drive, and essential I/O cards and ports. Each blade server is a self-contained compute system and is typically dedicated to a single application. A blade server is housed in a slot inside a chassis, which holds multiple blades and provides integrated power supply, cooling, networking, and management functions. The blade enclosure enables interconnection of the blades through a high speed bus and also provides connectivity to external storage systems. The modular design of blade servers makes them smaller, which minimizes floor space requirements, increases compute system density and scalability, and provides better energy efficiency as compared to tower and rack servers.

Form-factors

In a standard 1U (aka Pizza Box), the bottom part is composed of:

- 2 power plugs

- networking plugs for KVM (configuration console)

- BMC (Baseboard Management Controller), which runs a stand-alone OS that talks to the motherboard and is used for remote monitoring and management (e.g. shut down)

while the front (up) part contains:

- drives

- immediately above them there are the fans

- and the disk controller.

Typically the max number of CPUs is four and they are close to the memory modules.

- 1U Pizza box: 2 CPUs, ~10 drives disposed horizontally.

- 2U: 2 CPUs, 24 drives disposed vertically.

- 2U Twin square: 24 drives on the front disposed vertically, 4 servers 2 CPUs each, they share only the power.

- 10U Blade server: big chassis, up to 16 servers 2 CPUs each, simpler cabling, easy management and cost reduced.

- Intel Ruler: up to 1 petabyte, but there is no room for CPUs because it is a solid-state (SS) storage medium. It is possible to design a half-petabyte ruler with room for CPUs.

Servers differ from desktop systems in several ways:

- CPU architecture with a new generation memory organization called NUMA (Non-Uniform Memory Access).

  - Drop the assumption that all RAM is equal. NUMA is supported by the most used servers and virtualizers. Create threads and processes that are NUMA aware: split the data in an array so that each thread works on one part of it. APIs are provided to access specific memory zones in a NUMA architecture.

- Hyper-threading makes a single processor core appear as two logical processor cores, allowing the hyper-threading enabled operating system (or hypervisor) to schedule two threads simultaneously to avoid idle time on processor. However, the two threads cannot be executed at the same time because the two logical cores share the resources of a single physical core. When core resources are not in use by the current thread, especially when the processor is stalled (for example due to data dependency), resources of the core are used to execute the next scheduled thread.

  In this case a problem of memory sharing emerges, and the usual solutions are:

  - One cache per core

  - One cache per pair of cores

  - A shared RAM between some cores (multi-channel DRAM: more bandwidth than DDR)

  With two threads, in many cases 2 instructions can be executed at a time (thread overlapping, hyper-threading).

- Inter-socket and intra-socket connection:

  - initially cores used one or two token rings, now they use a mesh.

  - Crossbar interconnection (each CPU at the vertex of a square, connected by the edges and the diagonals too) between CPUs to save one hop.

- Intel AVX CPU architecture

- MCDRAM (multi-channel DRAM) with lower latency

Misc

Trade-off in CPU design: high frequency with few cores, or lower frequency with many cores. It all depends on the application: it may or may not benefit from high frequency (big data systems are more about capacity than latency).

Latency is slightly higher when accessing a RAM bank attached to another socket, because the request has to travel over the bus that interconnects the sockets (UPI in Intel CPUs).

Inside the core there are some functional units, such as the branch misprediction unit and the FMA (floating-point multiply-add) unit. Each core has a dedicated cache at L1 and a shared cache at L2.

SMART technology in drives: a predictive system in the drive that gives the probability that the drive will fail in the next hours. It is also used by the drive vendor for statistics and usage patterns.

Cloud

The cloud is a business model. The cloud is someone else's computer that you can use (by paying) to run your application with more reliability than your laptop offers (e.g. paying to run tests on your app using the cloud infrastructure because you need more resources). The interaction to obtain cloud resources should be "self service" as much as possible.

When you program for the cloud you don't know where your process will be executed or where your data will be stored.

Cloud is a collection of network-accessible IT resources:

- consists of shared pools of hardware and software resources deployed in data centers

One of the main concepts of cloud computing is pooling, which means that a set of heterogeneous resources can be viewed as one big resource in order to provide reassignment capability and location independence (the client cannot control where its data is, except perhaps for the geographical area). Another important concept is resource measurement: the cloud computing business model revolves around pricing and resource consumption, so the system must be able to monitor consumption.

Cloud computing benefits are:

- Agility

- Reduction of IT cost (CAPEX to OPEX)

- High Availability and fault tolerance

- Business Continuity

- Rapid development and testing

- No infrastructure management

There is a trade off between centralization (the bottleneck is the storage) and distribution (the bottleneck is the network).

Rapid Elasticity: consumers can adapt to variations in workload and maintain the required performance levels. This also reduces costs by avoiding overprovisioning.

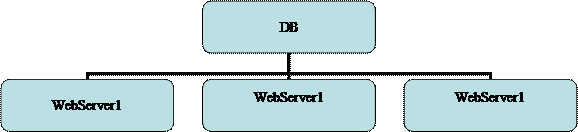

High Availability: the cloud provides high availability. This is achieved with redundancy of resources to avoid system failure. A load balancer is used to distribute requests across all the resources, to avoid failures due to resource saturation on a single machine.
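As an illustration of how redundancy and load balancing work together, here is a minimal round-robin balancer that skips servers marked as unhealthy. Round-robin is just one possible policy and is an assumption of this sketch; the class and server names are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hand out servers in turn, skipping the ones marked as unhealthy."""

    def __init__(self, servers: list[str]) -> None:
        self.healthy = {server: True for server in servers}
        self._order = cycle(servers)
        self._count = len(servers)

    def mark(self, server: str, healthy: bool) -> None:
        """Record the result of a health check for one server."""
        self.healthy[server] = healthy

    def pick(self) -> str:
        """Return the next healthy server, trying each server at most once."""
        for _ in range(self._count):
            server = next(self._order)
            if self.healthy[server]:
                return server
        raise RuntimeError("no healthy server available")


lb = RoundRobinBalancer(["a", "b", "c"])
print([lb.pick() for _ in range(4)])  # ['a', 'b', 'c', 'a']
lb.mark("b", False)                   # simulate a failed health check
print(lb.pick(), lb.pick())           # c a
```

With redundant servers behind the balancer, the failure of `b` is invisible to clients as long as at least one server stays healthy.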

The cloud infrastructure can be public, if it is provisioned for open use by the general public, or private, if it is provisioned for exclusive use by a single organization comprising multiple consumers.

Cloud computing Layers

The cloud infrastructure can be seen as a layered infrastructure.

Cross functional layers

In the cloud computing reference model there are some silos of cross-layer functionality; they mainly revolve around:

- Business Continuity: in a data center failure is the norm, so business continuity must be considered. It is both reactive (disaster recovery) and proactive (risk assessment, backup, replicas...).

- Security: policies, standard procedures, firewalls, antivirus, intrusion detection/prevention.

- Service Management: Portfolio (SLA, roadmap, customer support...) and operation (monitoring, provisioning, compliance...)

Physical Layer

The physical layer comprises compute, storage and network resources and executes both provider and consumer software. The compute systems can be shared between consumers or dedicated; providers typically use virtualisation and offer compute systems in the form of virtual machines. Several software components are deployed on compute systems:

- Self-service portal

- Platform and application software: PaaS and SaaS provided to the consumer

- Virtualisation software: useful for virtual resource pooling and creation

- Cloud management software: provider's software

- Consumer software

A compute system may physically be a tower, rack or blade.

The storage system is the repository for saving and retrieving data. Cloud storage provides massive scalability and long-term data retention. Virtualisation is used by cloud providers to create storage pools shared among consumers.

Independently from storage devices there are several data access methods, as seen previously:

- Block-based

- File-based (NAS)

- Object-based

- Unified

The network system must be reliable and secure; it enables data transfer and sharing of IT resources between nodes across geographic regions. The network system enables several kinds of communication:

- Compute-to-compute: the interconnection of physical systems, which typically use IP-based protocols. Compute systems access the network through NICs; physical switches and routers are the common interconnecting devices.

- Compute-to-storage: typically a SAN (storage area network). SANs are classified by the communication protocol they support (Fibre Channel (FC), IP, Fibre Channel over Ethernet (FCoE), etc.). A SAN is divided into units called LUNs (Logical Unit Numbers), which abstract the identity and internal functions of storage systems and can be dynamically resized.

  - FC SAN: a SAN that uses Fibre Channel to transport data. It provides block-level access to storage and can connect approx. 15 million nodes at speeds up to 16 Gbps. FC implements zoning, a switch function that enables ports within a fabric to be logically divided into groups; each node can communicate only with nodes within its group. This provides access control.

  - IP SAN: a SAN that uses the IP protocol to transport storage traffic. Since IP is a mature protocol, an IP SAN can be built on an existing IP infrastructure. There are two main IP SAN protocols, iSCSI and FCIP. The first encapsulates SCSI commands into IP packets and involves iSCSI initiators and iSCSI targets. The second, FCIP, encapsulates FC frames into IP packets.

  - FCoE SAN: made of Converged Network Adapters (CNA), software FCoE adapters, FCoE switches and FCoE ports.

- Inter-cloud: different clouds, even with different deployment models, are interconnected by a WAN.

Virtual Layer

The virtual layer is deployed on the physical layer. It abstracts physical resources, including storage and network, and makes them appear as virtual resources. It executes the requests generated by the control layer. It permits better use of the hardware when you have services that underuse it. With VMs there is a performance loss of about 10%, but we gain flexibility and security.

Benefits of virtualization:

- optimizes utilization of IT resources

- reduces cost and management complexity

- reduces deployment time

- increases flexibility

This allows a multi-tenant environment, since the VMs of multiple organizations can run on the same server.

The key concept is virtualisation: it enables a single hardware resource to support multiple concurrent systems, or vice versa. It is composed of 3 entities: virtualisation software, resource pool and virtual resources. Virtualisation applies to all the resources provided by the physical layer, hence we have compute virtualisation, storage virtualisation and network virtualisation. Hypervisors enable compute virtualisation: a hypervisor is software that enables a physical compute system to run multiple OSs concurrently. For every OS there is a virtual machine manager on top of the hypervisor kernel.

The network virtualisation software enables the creation of virtual LANs, virtual SANs and virtual switches. The storage virtualisation software allows the creation of virtual volumes, virtual disk files and virtual arrays.

The resource pool is an abstraction of aggregated computing resources such as processing power, memory capacity and network bandwidth. Cloud services obtain computing resources from resource pools. LUNs in a SAN are an example of storage pooling. The combination of virtualisation software and resource pool makes a virtual machine, which, like a physical system, runs an OS and applications.

VM files are managed using the hypervisor's native file system or a shared file system that enables a VM to use NAS devices, for example. The VM console is an interface used to manage and monitor a VM and can be local or remote. It can also be used for configuration, reboot, etc. A VM template is a "standard first version" of a VM that can be specialised: every VM can be converted into a template.

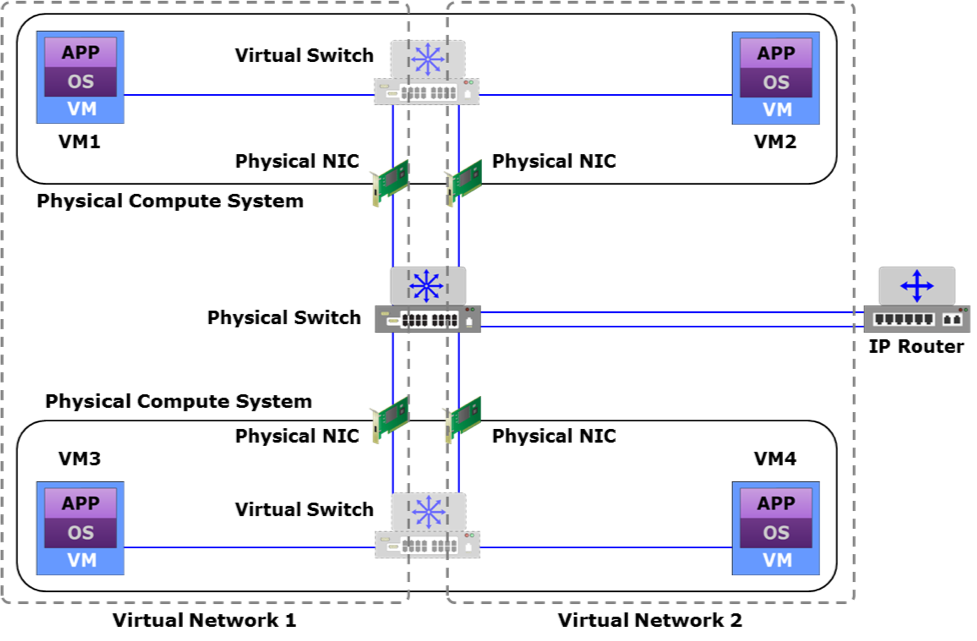

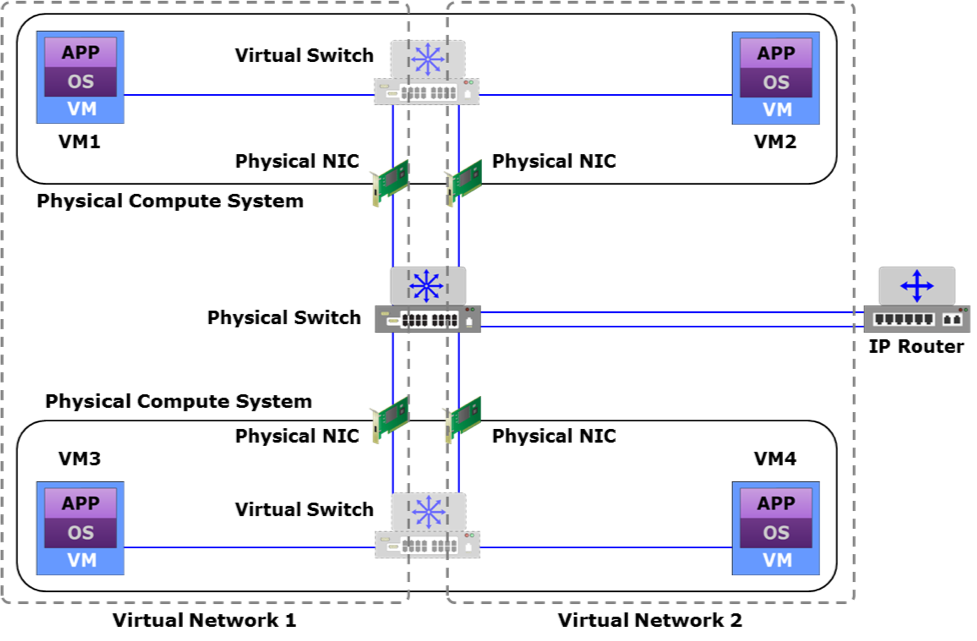

VM Network components

VM networks comprise virtual switches, virtual NICs, and uplink NICs that are created on a physical compute system running a hypervisor.


-

vSwitch: a virtual switch is a logical OSI Layer 2 Ethernet switch created within a compute system. A virtual switch is either internal or external. An internal virtual switch connects only the VMs on a compute system. It has no connection to any physical NIC and cannot forward traffic to a physical network. An external virtual switch connects the VMs on a compute system to each other and also to one or more physical NICs. A physical NIC already connected to a virtual switch cannot be attached to any other virtual switch.

-

vNIC: A virtual NIC connects a VM to a virtual switch and functions similar to a physical NIC. Virtual NICs send and receive VM traffic to and from the VM network. A VM can have one or more virtual NICs. Each virtual NIC has unique MAC and IP addresses and uses the Ethernet protocol exactly as a physical NIC does. The hypervisor generates the MAC addresses and allocates them to virtual NICs.

-

Uplink NIC: an uplink NIC is a physical NIC connected to the uplink port of a virtual switch and functions as an Inter-Switch Link between the virtual switch and a physical Ethernet switch. It is called uplink because it only provides a physical interface to connect a compute system to the network and is not addressable from the network. Uplink NICs are not assigned an IP address, and their built-in MAC addresses are not available to any compute system in the network. An uplink NIC simply forwards the VM traffic between the VM network and the external physical network without modification.

Virtual networks can be:

- VLAN: a virtual segregation of the broadcast domain of a LAN. The network infrastructure is shared but the devices belonging to a LAN are logically divided in groups.

- PVLAN : sub-VLAN that segregates nodes within a primary VLAN. It provides security between nodes on the same VLAN, simplifies network management and allows providers to support a larger number of consumers.

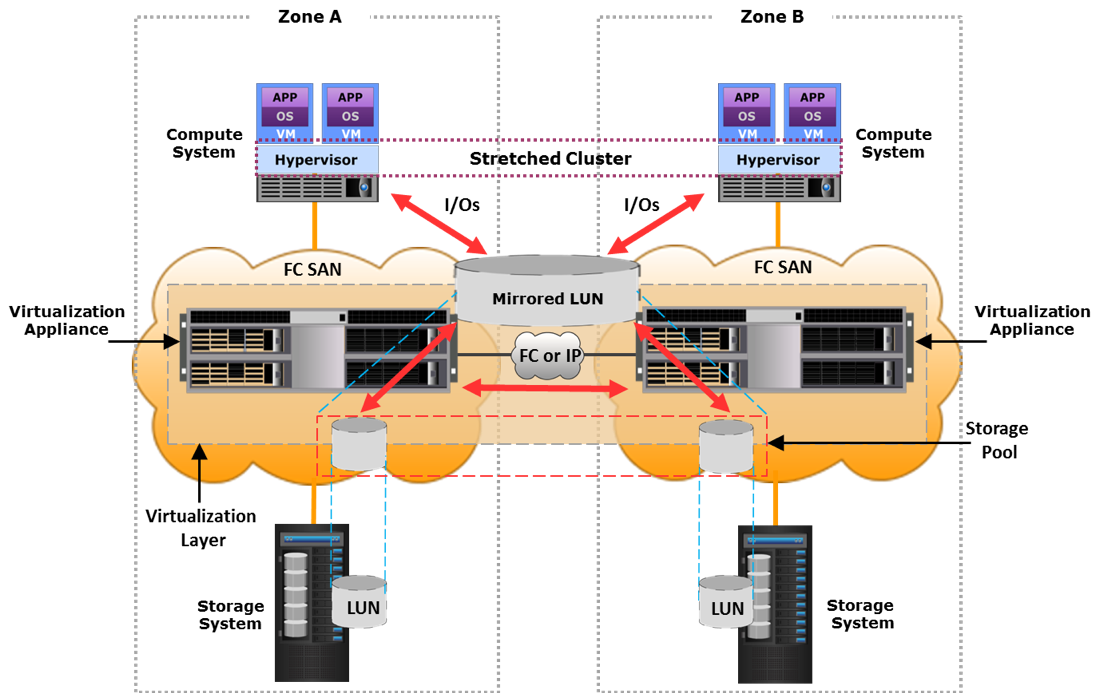

- Stretched VLAN : VLAN that spans multiple sites, enables the movement of VMs across locations without changing their network configs.

- VXLAN : overlay layer 2 network on top of a layer 3 network, it uses MAC in UDP encapsulation.

- VSAN : a logical fabric created over a physical FC or FCoE SAN enabling communication between nodes with a common set of requirements, independent of their physical location in the fabric.

There exists a mapping between VSANs and VLANs that determines which VLAN carries the traffic of a given VSAN.
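To make the "MAC in UDP" encapsulation used by VXLAN more concrete, the following is a minimal sketch of how a layer 2 frame is wrapped in a VXLAN header. It assumes the 8-byte header layout and UDP destination port 4789 defined in RFC 7348; the function names are hypothetical, and the outer UDP, IP and Ethernet headers that a real endpoint would add are left out.

```python
import struct

VXLAN_UDP_PORT = 4789        # destination UDP port assigned to VXLAN (RFC 7348)
VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field carries a valid value


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit VXLAN Network Identifier."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # First word: 8 flag bits + 24 reserved bits.
    # Second word: 24-bit VNI + 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def encapsulate(vni: int, inner_frame: bytes) -> bytes:
    """Return the UDP payload: VXLAN header followed by the original L2 frame."""
    return vxlan_header(vni) + inner_frame


def decapsulate(udp_payload: bytes):
    """Split a UDP payload back into (VNI, inner Ethernet frame)."""
    flags_word, vni_word = struct.unpack("!II", udp_payload[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI flag not set")
    return vni_word >> 8, udp_payload[8:]
```

The inner frame, MAC addresses included, travels unchanged inside the UDP payload, which is why the layer 2 segment can be stretched over a routed layer 3 network.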

As anticipated, LUNs are logical components of a SAN (storage pool) and their capacity can be changed dynamically. LUNs created from a storage pool fall into two categories:

-

Thin LUN : at creation time only a portion of the physical storage is actually allocated. Storage is consumed as needed, in increments called LUN extents. Thin LUNs are used when storage consumption is difficult to predict and when the application layer above can tolerate performance variations.

-

Thick LUN : storage is completely allocated at creation time.
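The difference between the two LUN categories can be sketched with a toy model. This is only an illustration under simplifying assumptions: the class names, the 1 GB extent size and the `write` method are hypothetical and do not correspond to any storage array API.

```python
EXTENT_GB = 1  # hypothetical extent size used for thin allocation


class StoragePool:
    """Physical capacity shared by all LUNs carved out of the pool."""

    def __init__(self, capacity_gb):
        self.capacity_gb = capacity_gb
        self.allocated_gb = 0

    def allocate(self, size_gb):
        if self.allocated_gb + size_gb > self.capacity_gb:
            raise RuntimeError("storage pool exhausted")
        self.allocated_gb += size_gb


class ThickLUN:
    """All physical storage is reserved at creation time."""

    def __init__(self, pool, size_gb):
        pool.allocate(size_gb)
        self.size_gb = size_gb
        self.allocated_gb = size_gb


class ThinLUN:
    """Physical storage is consumed extent by extent, as data is written."""

    def __init__(self, pool, size_gb):
        self.pool = pool
        self.size_gb = size_gb   # capacity presented to the host
        self.allocated_gb = 0    # physical capacity actually consumed

    def write(self, total_used_gb):
        """Grow the physical allocation until it covers the data written."""
        if total_used_gb > self.size_gb:
            raise ValueError("write beyond the presented LUN size")
        while self.allocated_gb < total_used_gb:
            self.pool.allocate(EXTENT_GB)
            self.allocated_gb += EXTENT_GB
```

In this model a 100 GB pool can host a 40 GB thick LUN and an 80 GB thin LUN at the same time: the thin LUN is oversubscribed and only fails if the data actually written outgrows the pool.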

VM components

The hypervisor is responsible for running multiple VMs. Since the goal is to execute x86 code on an x86 server, the code does not need to be translated. A hypervisor makes it possible to overbook physical resources, i.e. to allocate more resources than physically exist, and it also creates a virtual switch to distribute networking across all VMs.

Hypervisors types:

- Bare-metal: directly installed on the hardware. It has direct access to the hardware resources of the compute system. Therefore, it is more efficient than a hosted hypervisor. However, this type of hypervisor may have limited device drivers built-in. Therefore, hardware certified by the hypervisor vendor is usually required to run bare-metal hypervisors. A bare-metal hypervisor is designed for enterprise data centers and cloud infrastructure.

- Hosted: installed as an application on an operating system. In this approach, the hypervisor does not have direct access to the hardware and all requests must pass through the operating system running on the physical compute system.

Types of virtualization

Types of virtualization:

- paravirtualization: the virtual kernel cooperates with the hosting OS.

- hardware-assisted virtualization: the CPU is aware of the virtualization and distinguishes the interrupts generated by the vOS.

- driver integration: you don't have to emulate all the drivers, but can ask the underlying OS for this service.

Virtual Machine (VM)

Each Virtual Machine is a set of discrete configuration files whose values answer the questions: how much memory, how much disk, where is the disk file, how many CPU cores. Examples of those files are:

- configuration file: stores information, such as VM name, BIOS information, guest OS type, memory size

- virtual disk file: stores the contents of the VM's disk drive

- memory state file: stores the memory contents of a VM in a suspended state

- snapshot file: stores the VM settings and virtual disk of a VM

The disk is virtualized using a file, while for the network there is a vNIC (virtual Network Interface Card) connected to a vSwitch, which communicates with the physical NIC. The vNIC is also used by the real OS, because its physical NIC is busy serving the vSwitch.

The virtual CPU (vCPU) masks the features of a CPU from a VM. vCPUs can be overbooked, up to twice the number of cores. The CPU has several rings of protection (user ... nested vOS, vOS, OS).

vRAM ballooning

It's not allowed to use virtual memory as vRAM, because the sum of the vRAM should be less than or equal to the actual RAM. Fragmentation could be a problem if there is a lot of unused reserved memory. To address this, a technique called ballooning has been introduced.

The VM is told: "Look, you have 1 TB of RAM, but most of it is occupied". In this way we have dynamically expanding blocks of RAM: if the OS needs memory, the balloon can be deflated by moving the occupancy threshold.
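The ballooning idea can be illustrated with a small simulation. This is a sketch under strong assumptions: the class and method names are hypothetical, and a real balloon driver can only reclaim memory that the guest OS is able to give up, which is ignored here.

```python
class BalloonedVM:
    def __init__(self, name, configured_mb, balloon_mb):
        self.name = name
        self.configured_mb = configured_mb  # what the guest OS believes it has
        self.balloon_mb = balloon_mb        # memory "occupied" by the balloon

    @property
    def usable_mb(self):
        """Memory the guest can really use, backed by physical RAM."""
        return self.configured_mb - self.balloon_mb


class Host:
    def __init__(self, physical_mb):
        self.physical_mb = physical_mb
        self.vms = []

    def backed_mb(self):
        return sum(vm.usable_mb for vm in self.vms)

    def add_vm(self, vm):
        if self.backed_mb() + vm.usable_mb > self.physical_mb:
            raise MemoryError("not enough physical RAM for this VM")
        self.vms.append(vm)

    def inflate(self, vm, amount_mb):
        """Grow the balloon: the guest sees less free memory, the host regains it."""
        vm.balloon_mb += min(amount_mb, vm.usable_mb)

    def deflate(self, vm, amount_mb):
        """Shrink the balloon: the guest gets more usable memory."""
        amount_mb = min(amount_mb, vm.balloon_mb)
        if self.backed_mb() + amount_mb > self.physical_mb:
            raise MemoryError("inflate another VM's balloon first")
        vm.balloon_mb -= amount_mb
```

With an 8 GB host, two VMs configured with 8 GB each can coexist as long as their balloons keep the sum of usable memory within 8 GB; giving more memory to one VM requires inflating the balloon of the other first.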

Docker

It exploits Linux control groups (cgroups) and namespaces. The processes in a container can see only a part of the OS. The containers have to share the networking. Docker separates different software stacks on a single node.

Control Layer

This layer includes software and tools for managing and controlling the underlying infrastructure. The control layer can be deployed on top of the virtual or the physical layer and receives requests from the service and orchestration layers: it provisions the resources required to fulfil these requests. Together with the virtualisation layer, the control layer provides a unified view of all the resources of the cloud infrastructure and enables resource pooling and dynamic allocation of resources.

There exist two types of control layer:

- Element manager : it can be built-in or externally provided and it comprises management tools for compute, network and storage resources, because those components need to be managed independently from each other. Among the tasks performed by the element manager we find the initial configuration of OS, RAID, zoning, VLANs etc.; dynamic expansion of an existing resource pool with newly added resources; troubleshooting; performance and security monitoring.

- Unified manager : we can think of it as a layer mounted above an element manager. It provides a single management interface for the three components of the infrastructure (compute, network and storage). Like the element manager, the unified one provides a way to monitor the resources and to add or remove some of them from an already provisioned service. In addition, the unified manager exposes APIs that can be used by an orchestration layer to automate service provisioning.

Another (new) approach to abstract the underlying infrastructure components is the Software Defined Approach. With this approach it is possible to have an aggregated view of resources; it enables rapid provisioning and provides a mechanism to apply policies across the infrastructure. A software-defined approach, like the unified manager, offers APIs to controllers, enabling them to request and access resources as if they were services. Such an approach improves business agility, leads to lower CAPEX (specialized hardware is not necessary) and provides a scale-out architecture.

The main duty of the Control Layer is resource management, which can be relative or absolute. In the first case the resource allocation for a service is defined proportionally to the resources allocated to other service instances; in the second case the allocation is defined on the basis of quantitative (lower and upper) bounds.
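The two allocation styles can be sketched as follows. This is a minimal illustration: the function names and the notion of "shares" are assumptions introduced here, not terms taken from a specific product.

```python
def relative_allocation(capacity, shares):
    """Split capacity proportionally to the share value of each service instance."""
    total = sum(shares.values())
    return {name: capacity * share / total for name, share in shares.items()}


def absolute_allocation(demand, lower, upper):
    """Clamp a demand between a guaranteed lower bound and an upper limit."""
    return max(lower, min(demand, upper))


# Relative: 12 CPU cores split among three instances with shares 1, 2 and 3.
print(relative_allocation(12, {"a": 1, "b": 2, "c": 3}))

# Absolute: an instance asking for 10 GB with bounds [2, 8] GB.
print(absolute_allocation(10, lower=2, upper=8))
```

With relative allocation, what an instance receives changes whenever other instances are added or removed; with absolute allocation it depends only on the instance's own bounds.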

Every component has its resource management techniques.

- Compute resource management:

- Hyper-threading : every physical core is split into two logical cores that share the same physical resources; in this way the OS can schedule two threads simultaneously (one for each logical core).

- Memory page sharing : memory pages are shared among VMs and redundant copies of memory pages are eliminated by the hypervisor. VMs can have private copies of pages.

- Dynamic memory allocation : every VM's OS has an agent that communicates with the hypervisor. Through this communication, unused pages can be released and allocated to another VM that is short of memory.

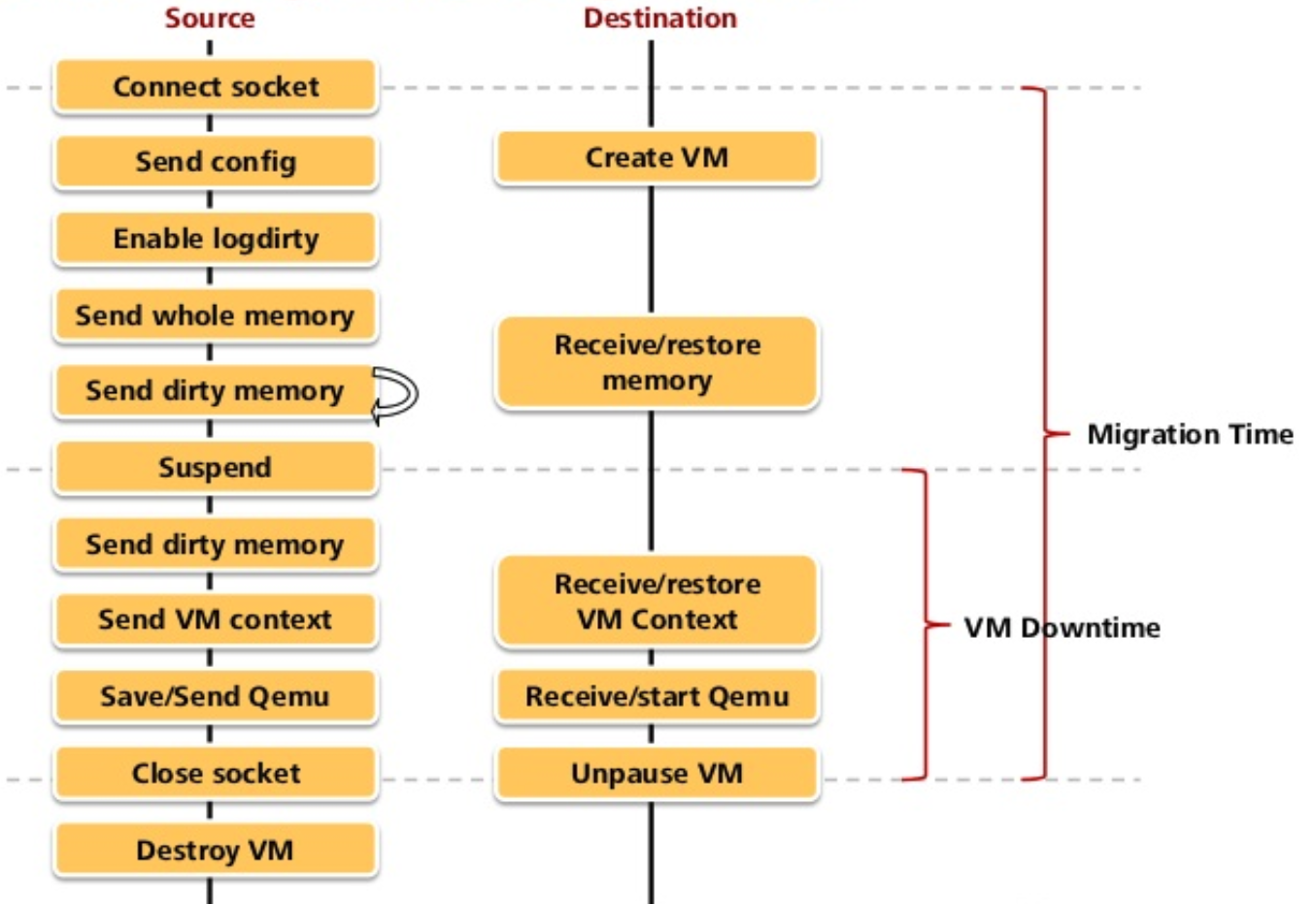

- VM load balancing across hypervisors : a management server monitors the load across hypervisors and, when a new VM is started, it chooses a hypervisor with enough resources. When the workload on a hypervisor exceeds the resources available, the management server migrates VMs to an underutilized hypervisor.

- Server flash-cache technology : according to the principles of locality and reuse, data is intelligently cached on flash storage. A warm-up time is needed before a performance improvement can be appreciated.

- Storage resource management:

- Virtual storage provisioning : using thin LUNs, this mechanism presents a LUN to an application with more storage than is actually allocated. Physical storage is allocated on demand; this simplifies storage management, improves performance and reduces storage cost.

- Storage pool rebalancing : when storage devices are added to a storage pool, this technique automatically rebalances the stored data, for example by striping and spreading data equally across all the drives of the pool.

- Storage space reclamation : unused space on thin LUNs is re-assigned to the storage pool, providing cost savings. The free space can be discovered by zero-extent reclamation (recall: extents are blocks of storage that form a LUN) or can be communicated to the storage system using an API.

- Automated storage tiering : storage is divided into tiers. Each tier is characterized by its storage type and by the categories of data it stores. This allows data to be placed automatically in the right tier. Tiers can have different levels of protection and performance, hence different costs.

- Cache tiering : allows the creation of a large-capacity cache by aggregating SSDs and DRAM into tiers, again characterized by the storage type.

- VM load balancing across storage volumes : it is like load balancing across hypervisors, but this time it is based on the storage requirements of VMs. Load balancing is performed within pools of volumes aggregated as one.

- Network resource management :

- Balancing client workload across nodes : a load balancer is placed between the internet and a cluster of nodes and decides where to forward incoming traffic.

- Network storm control : this technique prevents a flood of frames from creating excessive traffic in a LAN or VLAN. Switches count frames and, when a certain threshold is reached, they block traffic on the affected ports.

- QoS : a mechanism for prioritizing traffic.

- Traffic shaping : if traffic exceeds a certain threshold, switches start to enqueue packets for later transmission. Useful to avoid network congestion.

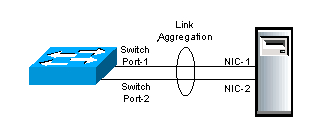

- Link aggregation : combines links between switches, or between switches and nodes, with the aim of distributing traffic across the aggregation.

- NIC teaming : the same as link aggregation but for network interface cards; it also provides fault tolerance through network failover.

- Multipathing : provides load balancing across links and link failover; it improves I/O performance and data path utilization.
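Among the network techniques listed above, traffic shaping and storm control differ in what happens to traffic above the threshold: the former delays it, the latter blocks it. The following tick-based simulation is a minimal sketch of that difference; the function names and the per-tick packet model are assumptions made for illustration only.

```python
def shape(arrivals, rate):
    """Traffic shaping: at most `rate` packets leave per tick, the rest wait in a queue.

    arrivals[i] is the number of packets arriving during tick i.
    Returns the packets transmitted in each tick until the queue is empty.
    """
    if rate <= 0:
        raise ValueError("rate must be positive")
    queue = 0
    sent = []
    tick = 0
    while tick < len(arrivals) or queue > 0:
        if tick < len(arrivals):
            queue += arrivals[tick]
        out = min(queue, rate)   # transmit up to the configured rate
        queue -= out             # everything else is delayed, not dropped
        sent.append(out)
        tick += 1
    return sent


def storm_control(arrivals, threshold):
    """Storm control: frames above the per-tick threshold are blocked (dropped)."""
    return [min(count, threshold) for count in arrivals]


burst = [5, 0, 0, 1]
print(shape(burst, rate=2))
print(storm_control(burst, threshold=2))
```

In the shaped case all six packets are eventually delivered, only later; with storm control the excess of the initial burst is lost.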

Summarizing, control software:

- Enables resource configuration and resource pool configuration

- Enables resource provisioning

- Executes requests generated by service layer

- Exposes resources to and supports the service layer

- Collaborates with the virtualization software and enables:

- Resource pooling and creating virtual resources

- Dynamic allocation of resources

- Optimizing utilization of resources

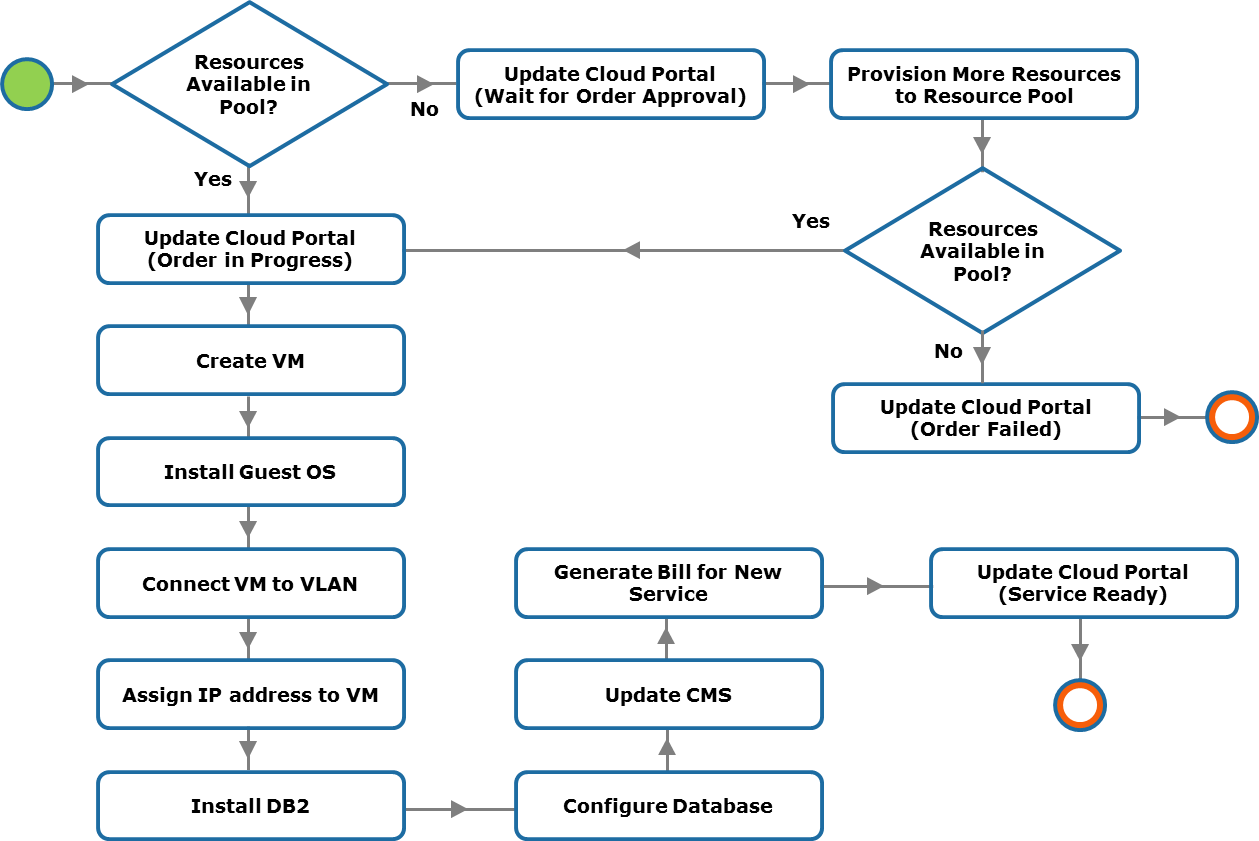

Key phases for provisioning resources

- Resource discovery: create an inventory of the infrastructure resources, so that the unified manager can learn what resources are available for cloud service deployment

- Resource pool management: virtual resources such as VM, virtual volume, and virtual network are created from these pools and provisioned for the services

- Resource provisioning: involves allocating resources from graded resource pools to the service instances
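The three phases can be sketched as a small pipeline. This is only an illustrative model: the resource dictionaries, the "gold" and "silver" grades and the function names are hypothetical and are not part of any unified manager's API.

```python
from collections import defaultdict


def discover(resources):
    """Resource discovery: build an inventory keyed by resource id."""
    return {res["id"]: res for res in resources}


def build_pools(inventory):
    """Resource pool management: aggregate capacity per (kind, grade)."""
    pools = defaultdict(int)
    for res in inventory.values():
        pools[(res["kind"], res["grade"])] += res["capacity"]
    return dict(pools)


def provision(pools, kind, grade, amount):
    """Resource provisioning: allocate from the matching graded pool."""
    key = (kind, grade)
    if pools.get(key, 0) < amount:
        raise RuntimeError(f"pool {key} cannot satisfy a request for {amount}")
    pools[key] -= amount
    return {"kind": kind, "grade": grade, "amount": amount}


# Hypothetical infrastructure: capacities are in GB.
infrastructure = [
    {"id": "array-1", "kind": "storage", "grade": "gold", "capacity": 100},
    {"id": "array-2", "kind": "storage", "grade": "gold", "capacity": 100},
    {"id": "array-3", "kind": "storage", "grade": "silver", "capacity": 500},
]

pools = build_pools(discover(infrastructure))
volume = provision(pools, "storage", "gold", 150)
print(volume, pools)
```

A service instance never refers to an individual array: it only asks for an amount of a given grade, and the pool hides which physical resources back it.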

Thin provisioning

This is a virtualization technology that gives the appearance of having more physical resources than are actually available. Thin provisioning allows space to be easily allocated to servers, on a just-enough and just-in-time basis. Thin provisioning is called "sparse volumes" in some contexts.

OpenStack

A free and open-source software platform for cloud computing, mostly deployed as infrastructure-as-a-service (IaaS), whereby virtual servers and other resources are made available to customers.

Good idea but bad implementation: it is a collection of various open source software components, difficult to deploy, with lots of dead code and a poor security implementation. It has a small form of orchestration but it is not a service orchestrator (i.e. no distribution of the workload, no scaling).

Service layer

What is a cloud service?

Cloud services are IT resources that are packaged by the service providers and are offered to the consumers. Once constituent IT resources are provisioned and configured, a service is instantiated.

Service layer

The service layer has three key functions which are as follows:

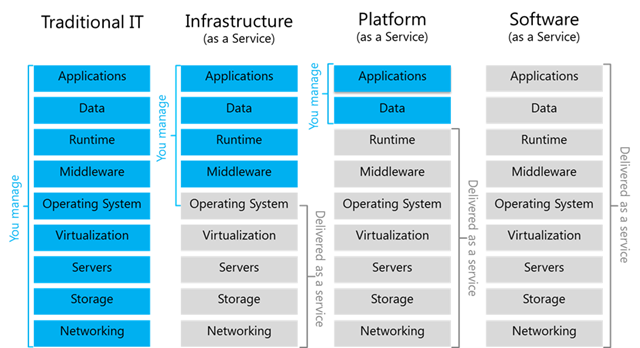

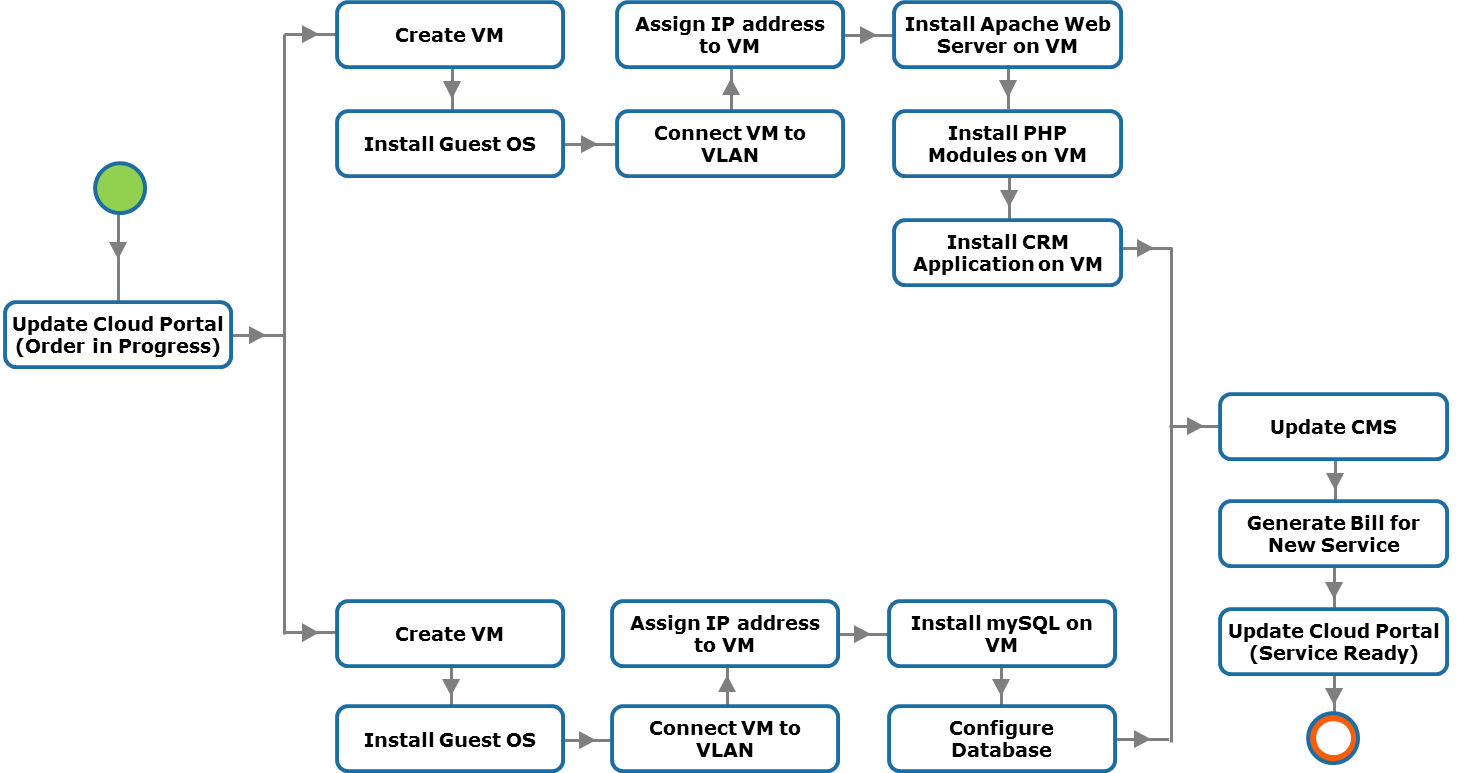

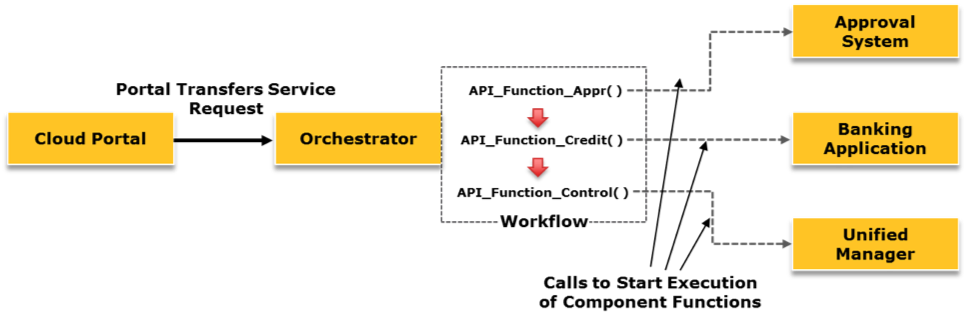

-